Topics: Facebook, Content Moderation, Social Media

Introduction

Social media platforms have become powerful communication tools in the digital era, connecting people around the world. With more than 2 billion monthly active users, Facebook is among the most influential of them. With that power comes great responsibility, and Facebook has long struggled with the difficult problem of content moderation. In this blog article, we’ll look at the challenges of content moderation on Facebook and discuss how hard it is to balance free expression against harm reduction.

The meaning of content moderation

Content moderation is the practice of monitoring, assessing, and, when required, removing user-generated content that violates a platform’s community standards or policies. On Facebook, this means reviewing enormous volumes of text, photographs, videos, and links. The objective is to maintain a welcoming and safe environment while upholding users’ right to free speech.

“We have a right to speak freely. We also have a right to life. When malicious disinformation – claims that are known to be both false and dangerous – can spread without restraint, these two values collide head-on.” – George Monbiot

Facebook content moderation

To keep material on the platform in line with its community standards, Facebook uses a multi-layered content moderation approach. First, it relies heavily on machine learning and artificial intelligence algorithms that automatically flag content that may break the rules, and it continues to invest in research and development to improve their grasp of context. Second, human moderation remains essential, particularly for material involving complex situations and culturally sensitive subjects. To cover multilingual and multicultural content, Facebook has expanded its global content review staff. To increase transparency and accountability, Facebook has also revised its content standards, clarified its guidelines and appeals processes, and established an independent Oversight Board to review content removal decisions (Translated by Content Engine LLC, 2021). Finally, partnerships play an important part in the fight against harmful content. Facebook works with external groups, such as fact-checking organizations and non-governmental organizations (NGOs), to address issues like hate speech and false information, and exchanges knowledge and best practices with them to keep improving its moderation techniques. Together, these measures are meant to strike a balance between free speech and community safety so that users have a good experience on the site.
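The layered idea described above (automated flagging first, human review for unclear cases) can be illustrated with a small sketch. This is not Facebook's actual system: the keyword-based `risk_score` is a toy stand-in for a trained classifier, and the thresholds, term weights, and all names are hypothetical, chosen only to show how a score could route a post to one of three outcomes.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    HUMAN_REVIEW = "human_review"
    REMOVE = "remove"


@dataclass
class Post:
    post_id: int
    text: str


# Hypothetical thresholds; a real system would tune these per policy and language.
REMOVE_THRESHOLD = 0.9
REVIEW_THRESHOLD = 0.5

# Toy stand-in for a trained classifier: flagged terms with made-up weights.
FLAGGED_TERMS = {"scam": 0.6, "threat": 0.95}


def risk_score(post: Post) -> float:
    """Return the highest weight of any flagged term in the post (0.0 if none)."""
    words = post.text.lower().split()
    return max((FLAGGED_TERMS.get(word, 0.0) for word in words), default=0.0)


def triage(post: Post) -> Decision:
    """Route a post: remove clear violations, send unclear cases to human review."""
    score = risk_score(post)
    if score >= REMOVE_THRESHOLD:
        return Decision.REMOVE
    if score >= REVIEW_THRESHOLD:
        return Decision.HUMAN_REVIEW
    return Decision.ALLOW


posts = [
    Post(1, "Lovely weather today"),
    Post(2, "This looks like a scam"),
    Post(3, "This is a threat"),
]
for post in posts:
    print(post.post_id, triage(post).value)
# 1 allow
# 2 human_review
# 3 remove
```

The middle band is the point of the design: content the automated layer is unsure about goes to a person rather than being removed or allowed outright. A keyword match also shows why automation alone makes mistakes, since it cannot tell a joke about a "threat" from a real one.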

Facebook faces several major challenges: the sheer volume of content, the difficulty of understanding context, political and cultural sensitivities, and the need to balance free expression against harm prevention. Processing hundreds of millions of pieces of user-generated content every day and checking them against the rules is a difficult undertaking, and although automated review is efficient, it is prone to errors. AI algorithms struggle with context and nuance, which makes it hard for them to tell sarcasm and humor apart from genuinely harmful content. Political and cultural factors also shape moderation decisions, which can lead to conflict. Because harmful content spreads globally, platforms must weigh the right to free speech against the need to stop it while taking the perspectives of many countries and regions into account. Deciding when to restrict content therefore raises moral and ethical questions as well.

The case study below looks more closely at internet governance and content moderation on Facebook.

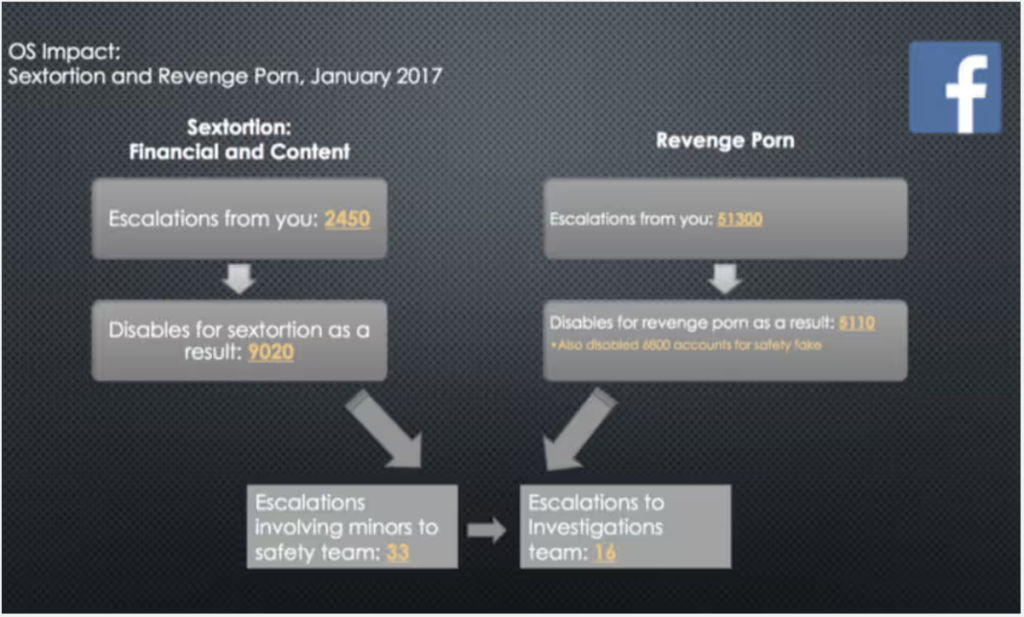

According to a leaked document, Facebook had to assess roughly 54,000 potential cases of “sextortion” and revenge porn on the service in a single month. Figures provided to staff show that in January Facebook had to disable more than 14,000 accounts connected to this type of sexual abuse, and 33 of the cases examined involved children. The documents, which form part of the Facebook Files obtained by the Guardian, reveal for the first time the specific guidelines the company has set for policing sexual content uploaded to the site, as well as the scale of the task facing the moderators responsible for it (Hopkins & Solon, 2017).

In January, moderators escalated 51,300 potential cases of revenge porn to senior managers. Revenge porn is defined as attempts to use intimate imagery to shame, humiliate, or take revenge on a person.

Facebook also escalated 2,450 cases of potential sextortion, which it defines as attempts to extort money or other imagery from a person. In total, 14,130 accounts were disabled as a result, and Facebook’s internal investigation team took on 16 cases (Hopkins & Solon, 2017).

The scope and seriousness of the content moderation problem became unmistakable when Donald Trump and his political allies circulated lies to cast doubt on the validity of the 2020 presidential election, culminating in the deadly assault on the U.S. Capitol. Most of the major social media platforms then suspended Trump’s account (Clegg, 2021). After promoting free speech and rejecting the role of “arbiters of truth” for so long, social media companies now seem to be rethinking their approach to policing online speech (Columbia Law Review & Kadlubowska, 2021). In 2020, Facebook banned Holocaust denial content and removed some white nationalist organizations from the platform (Bickert, 2020).

“We make a lot of decisions that affect people’s ability to speak. […] Frankly, I don’t think we should be making so many important decisions about speech on our own either.” – Mark Zuckerberg

These decisions rest on an ethical dilemma: should free speech be upheld even at the cost of letting false information spread and endanger people, or should false information be removed or penalized, thereby limiting free speech? When choosing between taking action (such as removing a post) and not taking action (such as leaving the post online), decision-makers must choose between two values (such as public health and free expression) that are not inherently mutually exclusive but cannot both be upheld in that case. Such situations are moral dilemmas: the agent morally ought to adopt each of two alternatives but cannot adopt both (Sinnott-Armstrong, 1988).

Restricting content is contentious and has significant consequences. Regulators are reluctant to impose limits on legal but harmful content, such as false information, and instead leave it to platforms to decide what is allowed. At the core of any approach to regulating online content is the trade-off between fundamental values like free expression and the protection of public health. When we examined which aspects of the content moderation dilemma influence people’s choices in these trade-offs, and what role personal attitudes play, we found that respondents’ willingness to delete a post or suspend an account rose with the seriousness of the consequences. Overall, the severity of harm, repeated offending, and the nature of the content mattered more than the characteristics of the person spreading the false information.

Conclusion

Given its enormous user base and the changing nature of online communication, Facebook faces formidable content moderation challenges. It is actively trying to address them through artificial intelligence, human review, transparency, and partnerships, but balancing free speech with harm prevention remains a persistent challenge.

Users like us also have a part to play in using social media responsibly. Although platforms like Facebook have a responsibility to monitor content, we should work to build a digital environment that encourages healthy debate, respects opposing ideas, and ultimately makes the internet safer for everyone. Finding the right balance requires a collective effort, one that will shape the future of social media and online communication.

References

Bickert, M. (2020, October 12). Removing Holocaust Denial Content. About Facebook. https://about.fb.com/news/2020/10/removing-holocaust-denial-content/

Clegg, N. (2021, June 4). In Response to Oversight Board, Trump Suspended for Two Years; Will Only Be Reinstated if Conditions Permit. About Facebook. https://about.fb.com/news/2021/06/facebook-response-to-oversight-board-recommendations-trump/

Columbia Law Review, & Kadlubowska, M. (2021, April 23). Governing Online Speech: From “Posts-as-Trumps” to Proportionality and Probability. Columbia Law Review. https://columbialawreview.org/content/governing-online-speech-from-posts-as-trumps-to-proportionality-and-probability/

Hopkins, N., & Solon, O. (2017, May 22). Facebook flooded with “sextortion” and “revenge porn”, files reveal. The Guardian. https://www.theguardian.com/news/2017/may/22/facebook-flooded-with-sextortion-and-revenge-porn-files-reveal?CMP=Share_iOSApp_Other

Imagga. (2020, June 26). Who needs Content Moderation? Facebook. https://www.facebook.com/imagga/photos/a.10151433063662298/10158420764837298/?__cft__

Meta. (2019, October 17). Mark Zuckerberg Stands for Voice and Free Expression. About Facebook. https://about.fb.com/news/2019/10/mark-zuckerberg-stands-for-voice-and-free-expression/

Monbiot, G. (2021, January 27). Covid lies cost lives – we have a duty to clamp down on them. The Guardian. https://www.theguardian.com/commentisfree/2021/jan/27/covid-lies-cost-lives-right-clamp-down-misinformation

Roberts, S., Hopkins, N., Maynard, P., & Ochagavia, E. (2017, May 21). The Facebook Files: sex, violence and hate speech – video explainer. The Guardian. https://www.theguardian.com/news/video/2017/may/21/the-facebook-files-sex-violence-and-hate-speech-video-explainer

Sinnott-Armstrong, W. (1988). Moral Dilemmas. Philpapers.org. https://philpapers.org/rec/SINMD

Translated by Content Engine LLC. (2021, October 21). Facebook board to review celebrity content moderation rules. ProQuest. http://ezproxy.library.usyd.edu.au/login?url=https://www.proquest.com/wire-feeds/facebook-board-review-celebrity-content/docview/2584642485/se-2?accountid=14757

Zia, M. (2019, January 1). New Report Reveals: Facebook’s Content Moderation Relies on Highly Inaccurate Methods. Blogspot.com. https://3.bp.blogspot.com/-HAndgH_rRaQ/XCukSc8aggI/AAAAAAABuFM/s5wu2vUTlV08hS-aWhILVUeXCqYMz5HrgCLcBGAs/s1600/facebook-news.jpg