Topic: Content Moderation, “Harmful” content, Twitter, Facebook, mental health, self-regulation, multiple roles, Cooperation with government, Splinternet, challenge

More Content Moderation Is Not Always Better © 2021 by San Whitney: Getty Images is licensed under CC BY-ND 4.0

Abstract

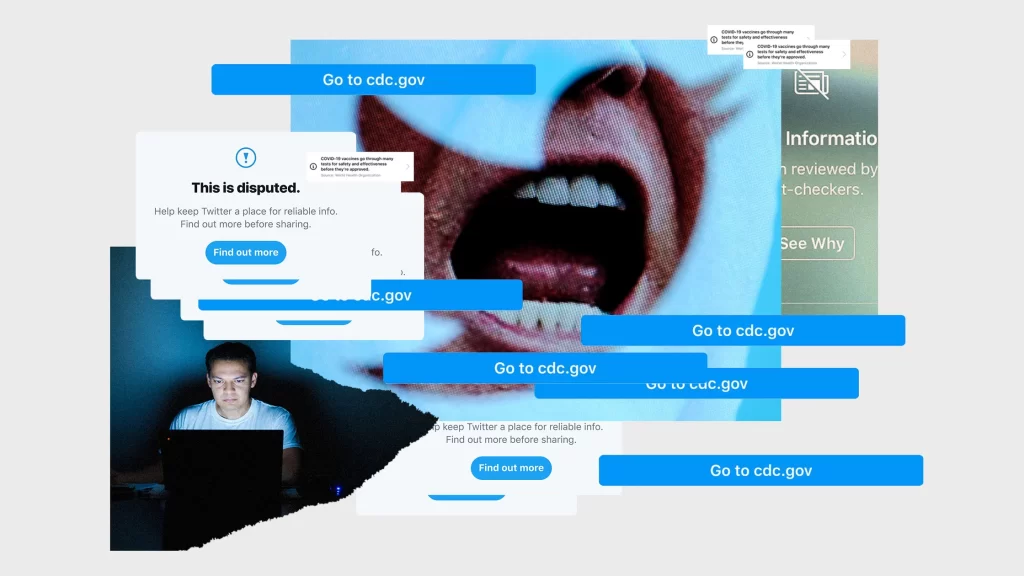

This article examines the challenges and problems posed by the free flow of content on the Internet, with a particular emphasis on the far-reaching impact of harmful content on individual users (especially teenagers) and society. By analyzing the growth of harmful content on online platforms (e.g., Twitter), the article sheds light on the magnitude of the problem and explores its potential to distort individual values, damage psychological well-being, and cause social and real-world harm.

State of the Internet

The Internet was initially seen as a utopia for the free flow of information: content could be shared through the medium of the “Internet”, creating a space of equality and sharing (Gillespie, 2018). Over time, however, problems began to emerge, as pornography, obscenity and illegal content began to spread as the Internet grew in size and influence. Twitter, for example, saw an overall increase in the amount of “harmful” content posted by its users between January and June 2021, with violent images and adult content reaching 131 per cent of the total (Rules Enforcement – Twitter Transparency Center, 2022). Twitter’s data further demonstrates the state of the Internet, which is contrary to its original purpose and continues to pose a challenge.

The emergence of a large amount of harmful content has a profound impact on individual users and on society, both in the real world and online.

At the individual user level, exposure to harmful content can lead to distorted values and mental health problems, especially for children and adolescents, a special population.

Distortion of values:

As a main source of information, Internet content influences users’ judgement. When users are exposed to extreme, biased or one-sided information over a long period of time, their perceptions and values may be skewed. They may find it difficult to distinguish between the real world and the online world, and thus come to rely on the mistaken views and behaviours they encounter on the Internet.

For teenagers, prolonged exposure to violent and illegal content in cyberspace may confuse their moral judgement, making it difficult for them to distinguish between right and wrong, and they may imitate the harmful behaviours they see on the Internet (Pérez et al., 2023).

Impaired mental health:

Constant exposure to frightening online content can lead to anxiety. For example, images from disasters or threatening content in reports of terrorist activities can lead to anxiety and depression.

Children, as a special population, are highly vulnerable to harm. Research summarized by the American Psychological Association has found that children’s prolonged viewing of violent images on television can lead to reduced sensitivity to the distress of others, a heightened sense of insecurity, and a risk of aggressive behavior toward others (American Psychological Association, 2013).

Violence in the media: Psychologists study potential harmful effects © 2013 by American Psychological Association is licensed under CC BY-ND 4.0

Socially, violent media and hate speech exacerbate social inequalities while leading to real-life violent attacks.

Exacerbating social inequalities:

Hate speech is a manifestation of “harmful” content on the Internet, usually directed at a particular race, gender, religion or other specific identity. Since the outbreak of COVID-19, Chinese, Asians and Jews have been at the centre of this type of speech by being incorrectly labelled as the main transmitters of the disease (Pérez et al., 2023). The spread of these negative discourses has further widened the rift in society, fuelling prejudice and antagonism between people.

The echo-chamber effect of the Internet also contributes to the spread of such speech, thus reinforcing prejudices in society.

Violent attacks in real life:

People exposed to violent, hateful content may come to identify with a viewpoint that triggers violent behavior in real life.

The modern digital world is thus both complex and multifaceted. The persistence of “harmful” content has caused varying degrees of damage to both individuals and society. This makes people wonder: who is responsible for this “harmful” content? And who can stop the proliferation of such information? How can those responsible take action?

Individuals/creators are responsible for self-published content.

Take Twitter again as an example. Its publishing rules clearly list the types of content that may not be posted, including violent speech, violent and hateful entities, sensitive media, and child sexual exploitation (Twitter’s Violent Speech Policy | Twitter Help, 2023). Creators are required to create their content within these limits and publish it in accordance with Twitter’s rules. Twitter has set penalties for different types of violations. For example, if a user posts content that threatens or incites violence, Twitter may temporarily lock the account or permanently suspend it, depending on the severity of the content’s impact (Twitter’s Violent Speech Policy | Twitter Help, 2023). Although the online world is a relatively free space for speech, people still need to take responsibility for the content they post.

Platforms need to be responsible for the content within them

Why are platforms responsible for “harmful” content?

Digital platforms are the main means of circulating “dangerous” content: unlike earlier forms of media, with the emergence and growth of the Internet, people have moved from being passive recipients to being active publishers and receivers (McQuail, 2013). Social media platforms, as a product of this shift, have increased the timeliness and frequency of audience communication. In addition, with the help of media platforms, everyone in modern society has the power to publish what they want to express. This has led to an influx of mixed messages, including “harmful” content. In order to protect the interests of other Internet users, platforms need to prevent the circulation of malicious content through technical means and community guidelines to ensure the safety of the public in the online space (Flew et al., 2019).

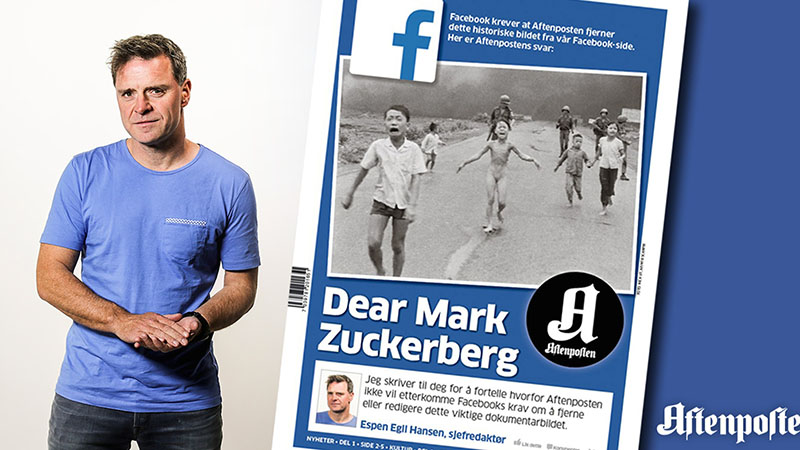

Facebook Now Allows Iconic ‘Napalm Girl’ Photo After Public Outrage © 2016 by Michael Zhang is licensed under CC BY-ND 4.0

How is “harmful” content defined?

Defining what makes content harmful is challenging. Consider “The Terror of War”, also known as “Napalm Girl”, by Nick Ut. Upon release, its message was widely debated. In 2016, Norwegian journalist Tom Egeland used the photo in a war history article. Facebook removed it due to violence and underage nudity (Gillespie, 2018). When Egeland reposted it criticizing Facebook, his account was blocked. Even as many, including the Norwegian prime minister, shared the story, Facebook maintained its removal stance due to the image’s violent and nude content. However, many people believe that Ut’s photograph is historically and emotionally significant. This reveals the diversity of people’s understandings of and reactions to the same content, making it difficult for digital platforms to set clear red lines for content.

How can platforms be held accountable for this “harmful” content?

Although the harmfulness of content is difficult to identify, platforms need to introduce measures to moderate content in order to stop harmful material from being disseminated. Whether through warnings or heavy fines, and whether action is taken before or after a report is made, platforms need to have enforcement policies in place to deal with such content (Gillespie, 2018). Platforms need to make content moderation part of their operations to reduce the output of “harmful” content.

Modern media platforms achieve accountability for “harmful” content primarily through self-regulation and cooperation with communities and governments.

Self-regulation: Both major and minor media platforms require stringent content self-regulation. Facebook, a leading social media site, manages a vast amount of daily content. To protect users, Facebook employs third-party agents and an independent board to address harmful content (Medzini, 2021). Specifically, the Facebook Oversight Board reviews content disputes and offers policy advice (Medzini, 2021). If a political post is taken down for breaching guidelines, users can appeal. The board then evaluates the removal, confirms whether the post breached the rules, and decides whether the content should be reinstated.

Dealing with the challenge of grey areas: Though platforms might aim to simplify content censorship, some content can’t be clearly labeled as “allowed” or “prohibited”. For instance, content with historical and educational significance might still be deemed offensive.

Controversial decision-making: When platforms remove content with social or historical value, they may face criticism and opposition from the public. Facebook’s handling of the “Napalm Girl” incident is one example (Gillespie, 2018).

Working with communities and governments: Social media platforms today aim to balance freedom of expression with content regulation by collaborating with governments and communities. For instance, Facebook, known for its openness, actively listens to its community (Gillespie, 2018).

Problems with working with communities and governments:

Government censorship: Governments might request content removal, raising concerns about online transparency and freedom of expression.

Questioning impartiality: Governments might use social media to sway public opinion for political aims. For instance, former US President Donald Trump influenced voters via targeted Facebook ads and campaigns (Scott, 2018).

The Challenges of the Splinternet for Content Auditing:

Different countries’ policies have led to the “Splinternet,” a segmented Internet. Content restrictions that apply only in certain areas make content auditing exceptionally challenging (Lemley, 2021).

Different censorship: The Internet’s fragmentation means different countries or regions have unique content censorship standards. This complexity challenges global platforms in creating a unified censorship approach due to diverse regulations.

Technical challenges: To meet varying regional regulations, platforms might need multiple service versions. For instance, a social media platform might create a specific version for a country where certain content is altered or blocked.

Reference List:

American Psychological Association. (2013). Violence in the media: Psychologists study potential harmful effects. Apa.org; American Psychological Association. https://www.apa.org/topics/video-games/violence-harmful-effects

Flew, T., Martin, F., & Suzor, N. (2019). Internet regulation as media policy: Rethinking the question of digital communication platform governance. Journal of Digital Media & Policy, 10(1), 33–50. https://doi.org/10.1386/jdmp.10.1.33_1

Gillespie, T. (2018). CHAPTER 1. All Platforms Moderate. In Custodians of the Internet: Platforms, Content Moderation, and the Hidden Decisions That Shape Social Media (pp. 1-23). New Haven: Yale University Press. https://doi.org/10.12987/9780300235029-001

Lemley, M. A. (2021). The Splinternet. Duke Law Journal, 70(6), 1397+. https://link.gale.com/apps/doc/A666103930/AONE?u=usyd&sid=bookmark-AONE&xid=86275a95

Katz, A. (2017). Scenes From the Deadly Unrest in Charlottesville. TIME.com; TIME. https://time.com/charlottesville-white-nationalist-rally-clashes/

Massanari, A. (2017). Gamergate and The Fappening: How Reddit’s algorithm, governance, and culture support toxic technocultures. New Media & Society, 19(3), 329–346. https://doi.org/10.1177/1461444815608807

McQuail, D. (2013). The Media Audience: A Brief Biography-Stages of Growth or Paradigm Change? The Communication Review (Yverdon, Switzerland), 16(1-2), 9–20. https://doi.org/10.1080/10714421.2013.757170

Medzini, R. (2021). Enhanced Self-Regulation: The Case of Facebook’s Content Governance. New Media & Society, 24(10), 146144482198935. https://doi.org/10.1177/1461444821989352

Pérez, J. M., Luque, F. M., Zayat, D., Kondratzky, M., Moro, A., Serrati, P. S., Zajac, J., Miguel, P., Debandi, N., Gravano, A., & Cotik, V. (2023). Assessing the Impact of Contextual Information in Hate Speech Detection. IEEE Access, 11, 30575–30590. https://doi.org/10.1109/ACCESS.2023.3258973

Rules Enforcement – Twitter Transparency Center. (2022, January 11). Transparency.twitter.com. https://transparency.twitter.com/en/reports/rules-enforc

Twitter’s Violent Speech Policy | Twitter Help. (2023, June). Help.twitter.com. https://help.twitter.com/en/rules-and-policies/violent-speech