Featured Image Licenses: “Instagram” by Solen Feyissa is licensed under CC BY-SA 2.0, and “Instagram logo on gradient header” by SmedersInternet is licensed under CC BY 2.0.

At first glance, Instagram appears to be an all-in-one platform for users, offering everything from entertainment and creation to open expression and making money (Wilding et al., 2018). Yet many users are dissatisfied with how Instagram manages its community guidelines and have repeatedly called for better monitoring. So, is Instagram’s diverse platform really what it seems to be?

Introduction

As Instagram usage continues to expand, the issues surrounding its community guidelines and content moderation practices have become more complex, particularly the question of what content can or should be permitted on the platform (Gillespie, 2018). With 2.35 billion active users worldwide, Instagram ranks as the fourth-largest social media platform (Ruby, 2023) and has evolved from a simple communication tool into a dynamic venue for information sharing, public discourse, and social engagement (Gillespie, 2018). However, recent criticisms have drawn attention to Instagram’s struggles to implement its community guidelines and content moderation strategies effectively, specifically in addressing hate speech and discrimination (Are, 2023). These challenges underscore the difficult balance between free expression and the need to maintain a safe and courteous online community. While Instagram’s community guidelines urge users to care for others and respect their viewpoints, the difficulty lies in how complex these issues are to manage (ACMA, 2022; Instagram, 2023). It is clear that more extensive and proactive measures are needed to ensure a safer and more responsible online environment. In this article, I will examine hate speech and discrimination on Instagram, investigating the efficacy of the platform’s community guidelines and why these problems persist despite Instagram’s policies.

“Group of diverse people using smartphones” by Rawpixel.com is licensed under CC0 1.0.

Understanding Instagram’s Community Guidelines

Instagram’s (2023) community guidelines are a set of rules that form the framework for ethical and respectful online conduct. They include an extensive list of standards and requirements that users must follow when engaging with or using the platform. These policies address prohibited behaviour, such as unlawful conduct, hate speech, and online harassment, along with other acts that may result in account suspension or permanent removal (Roberts, 2019; Instagram, 2023). The ultimate goal is to keep the community an authentic and safe space for inspiration and self-expression (Instagram, 2023).

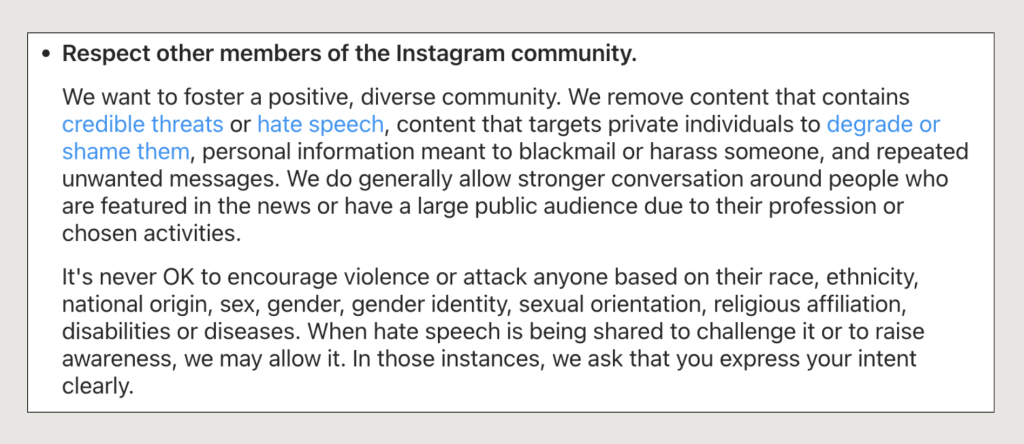

As shown in Figure 1, hate speech and discrimination are NOT allowed on the platform:

Figure 1. Instagram’s Community Guidelines on Hate Speech & Discrimination (Source: Instagram, 2023)

So, How Does Instagram Prevent These Issues?

To ensure that users adhere to its terms of service, Instagram uses several approaches to address these concerns:

- Comment filters, which allow users to filter out hateful or disrespectful comments (Instagram, 2023);

- Artificial intelligence (AI) systems that proactively identify and remove unlawful content (Hong et al., 2023); and

- Human review teams, to which AI systems forward content for closer investigation to determine whether a post should be removed (Instagram, 2023).

“How Does Instagram Moderate Content In 2023?” © 2023 by HowToUnleashed is licensed under CC BY 4.0

Therefore, when content such as posts, comments, or videos violates these policies (e.g., through hate speech or discrimination), Instagram takes action through these methods to foster a positive and respectful online environment (Roberts, 2019).

BUT! Instagram’s Regulations and Strategies are INEFFECTIVE.

From the beginning, Instagram’s objective has undoubtedly been to offer users a platform to share and create content about their interests, lifestyles, and beyond. It does serve as a great space for users to connect and express themselves (Maddox & Malson, 2020). However, the problem lies in Instagram’s (2023) Comments and Direct Messages (DMs) features, which, despite the platform’s community guidelines and moderation measures, allow users to discriminate against and harm others (Are, 2023).

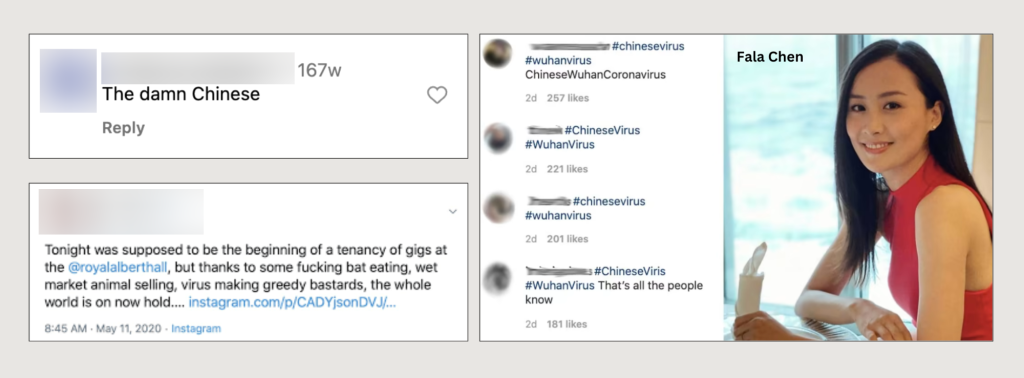

A prominent example is the serious wave of anti-Asian prejudice during the COVID-19 pandemic in 2020 (Hong et al., 2023). As the pandemic swept the world, Instagram became a breeding ground for xenophobic behaviour, with 76% of hate crimes targeting Asian users, groups, and celebrities such as Fala Chen (Figure 2) (Ziyi, 2020; Jang et al., 2023). These harmful and discriminatory remarks reinforced toxic stereotypes and made Asian individuals feel unsafe online (Croucher et al., 2020).

In addition, Instagram is well known for its popular Hashtag (#) feature. In 2020, the hashtag #ChineseVirus was used on the platform over 68,000 times in reference to the new coronavirus, SARS-CoV-2 (Karakoulaki, 2021). Yet, as illustrated in Figure 2, its initial purpose shifted drastically when certain users began adding the hashtag to posts and comments to spread anti-Asian beliefs, fuel xenophobia, and blame Chinese people for the virus’s spread (Hong et al., 2023).

According to the findings of Hong et al. (2023), a large proportion of Asian Instagram users reported receiving threats, hateful comments, and accusations. These users have stated that Instagram’s community standards have been ineffective in preventing the spread of hate speech and prejudice, as such content continues to appear (Ziyi, 2020; Hong et al., 2023). One user expressed frustration with Instagram’s content filtering, claiming that some content that clearly violates the community rules remains on the site, while less harmful content is removed (Kozyreva et al., 2023). This inconsistency further erodes users’ trust in Instagram’s moderation and regulation efforts.

Figure 2. Anti-Asian Hate Speech During COVID-19 Pandemic (Source: Ziyi, 2020; Instagram, 2020; White, 2020)

These instances, therefore, underline the apparent vagueness and inconsistency of Instagram’s Community Guidelines. Although its policies promise to remove content that contains hate speech or threats (Figure 1) (Instagram, 2023), Instagram has failed to do so consistently. As a result, its ability to handle and prevent hate speech and discrimination has been called into question. While community standards do exist, the issue is: at what cost?

“WSP Vigil for Asian Americans” by Andrew Ratto is licensed under CC BY 2.0.

Conclusion

In our dynamic and ever-changing digital world, the rise of hate speech and discrimination, as exemplified by the COVID-19 situation, is unsurprising. Given Instagram’s massive user base, the platform clearly faces significant obstacles due to its inconsistent community guidelines. While Instagram (2023) tries to prevent hate speech and discrimination through AI, comment filters, and human review processes (Hong et al., 2023), the constant difficulty is striking the right balance between open expression and a respectful online forum for its diverse user community. It is important to note that these issues are not exclusive to Instagram; other social media platforms such as Facebook, TikTok, and YouTube face similar challenges.

I believe that Instagram needs to strengthen its content moderation practices and enforce its community guidelines more effectively, rather than simply displaying them for the sake of its “reputation” and “public image”. Of course, one may argue that individuals have a responsibility to prevent the spread of hate speech and racial prejudice, but it is important to realise that individual effort alone may not be enough to eradicate such issues. Therefore, Instagram should apply stronger and stricter procedures to prevent these problems from occurring.

References

ACMA. (2022). What audiences want – Audience expectations for content safeguards. Acma.gov.au. https://www.acma.gov.au/sites/default/files/2022-06/What%20audiences%20want%20-%20Audience%20expectations%20for%20content%20safeguards.pdf

Are, C. (2023). An autoethnography of automated powerlessness: lacking platform affordances in Instagram and TikTok account deletions. Media, Culture & Society, 45(4), 822–840. https://doi.org/10.1177/01634437221140531

Buschman, F. (2017). Instagram logo on gradient header. Flickr. https://www.flickr.com/photos/138935140@N06/38004399845

Croucher, S. M., Nguyen, T., & Rahmani, D. (2020). Prejudice toward Asian Americans in the COVID-19 pandemic: The effects of social media use in the United States. Frontiers in Communication, 5. https://doi.org/10.3389/fcomm.2020.00039

Djpaulvd. (2020). Dear COVID-19, I hate You! #lifebeforecovid19 #CovidYouKillMyVibe. Instagram. https://www.instagram.com/p/CCOgfwEATDU/?hl=en

Feyissa, S. (2020). Instagram. Flickr. https://www.flickr.com/photos/solen-feyissa/50383633422

Gillespie, T. (2018). All platforms moderate. In Custodians of the Internet: Platforms, Content Moderation, and the Hidden Decisions That Shape Social Media (pp. 1–23). Yale University Press. https://doi.org/10.12987/9780300235029

Hong, T., Tang, Z., Lu, M., Wang, Y., Wu, J., & Wijaya, D. (2023). Effects of #coronavirus content moderation on misinformation and anti-Asian hate on Instagram. New Media & Society. https://doi.org/10.1177/14614448231187529

HowToBeUnleashed. (2023, June 16). How Does Instagram Moderate Content In 2023? https://youtu.be/DSkZ1mvEMyc?si=WrCDDancX5YvLecV

Instagram. (2023). Instagram Help Centre. Instagram.com. https://help.instagram.com/477434105621119

Jang, S. H., Youm, S., & Yi, Y. J. (2023). Anti-Asian discourse in Quora: Comparison of before and during the COVID-19 pandemic with machine- and deep-learning approaches. Race and Justice, 13(1), 55–79. https://doi.org/10.1177/21533687221134690

Karakoulaki, M. (2021, May 11). Anti-Asian hate and coronaracism grows rapidly on social media and beyond. Media Diversity Institute. https://www.media-diversity.org/anti-asian-hate-and-coronaracism-grows-rapidly-on-social-media-and-beyond/

Kozyreva, A., Herzog, S. M., Lewandowsky, S., Hertwig, R., Lorenz-Spreen, P., Leiser, M., & Reifler, J. (2023). Resolving content moderation dilemmas between free speech and harmful misinformation. Proceedings of the National Academy of Sciences of the United States of America, 120(7). https://doi.org/10.1073/pnas.2210666120

Maddox, J., & Malson, J. (2020). Guidelines without lines, communities without borders: The marketplace of ideas and digital manifest destiny in social media platform policies. Social Media + Society, 6(2), 205630512092662. https://doi.org/10.1177/2056305120926622

PxHere. (2018). Group of diverse people using smartphones. Pxhere.com. https://pxhere.com/en/photo/1449179

Ratto, A. (2021). WSP vigil for Asian Americans. Wikimedia.org. https://commons.wikimedia.org/wiki/File:WSP_Vigil_for_Asian_Americans_%2851057213561%29.jpg

Roberts, S. (2019). Understanding commercial content moderation. In Behind the Screen: Content Moderation in the Shadows of Social Media (pp. 33–72). Yale University Press. https://doi.org/10.12987/9780300245318-003

Ruby, D. (2023, August 7). 77 Instagram statistics 2023 (active users & trends). DemandSage. https://www.demandsage.com/instagram-statistics/

White, A. (2020, May 12). Bryan Adams under fire for ‘racist’ tweet blaming ‘bat eating b*******’ for coronavirus. Independent. https://www.independent.co.uk/arts-entertainment/music/news/bryan-adams-coronavirus-bat-china-racist-vegan-royal-albert-hall-twitter-a9509521.html

Wilding, D., Fray, P., Molitorisz, S., & McKewon, E. (2018). The impact of digital platforms on news and journalistic content. University of Technology Sydney. Accc.gov.au. https://www.accc.gov.au/system/files/ACCC+commissioned+report+-+The+impact+of+digital+platforms+on+news+and+journalistic+content,+Centre+for+Media+Transition+(2).pdf

Ziyi, T. (2020, March 20). Netizens bombard Fala Chen with negative comments after she criticises Donald Trump for using ‘Chinese virus’ label. TODAY. https://www.todayonline.com/8days/sceneandheard/entertainment/netizens-bombard-fala-chen-negative-comments-after-she-criticises

This Article © 2023 by Shalee Boey is licensed under CC BY-NC-ND 4.0