Topic covered: Online harms and content moderation

Introduction

This blog discusses the spread and severity of online harm by analysing social media challenges, in order to argue for the importance of content moderation. It claims that, in balancing the right to free speech against content regulation, platforms should at this stage focus on ensuring public safety, user well-being and a reasonable degree of information diversity.

Moderation is essential

Social media content moderation refers to the process by which platforms restrict and remove harmful information, such as hate speech and violent content, to protect the public interest and public safety (Brown, 2022). Such management can help prevent social unrest and incidents that jeopardise personal safety. The following section discusses online harm and argues for the importance of platform management from the perspective of social media challenges.

(“File:Doing the ALS Ice Bucket Challenge (14927191426).jpg” by slgckgc is licensed under CC BY 2.0.)

- Social media challenges

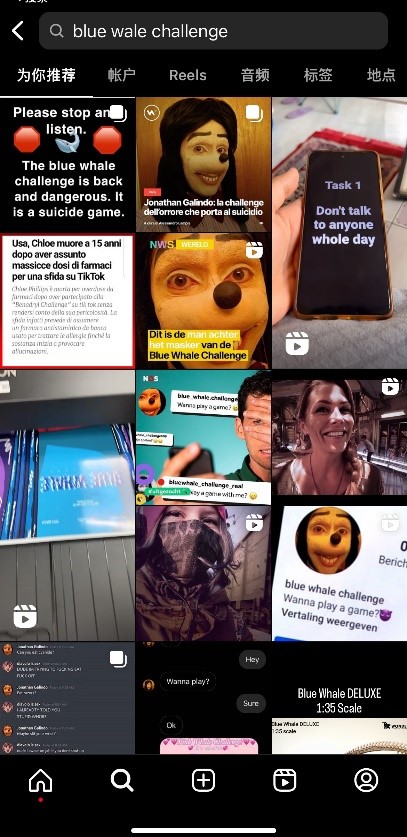

In recent years, with the rapid development of the Internet, social media and online platforms have become essential channels for people to obtain information and communicate. As of July 2023, there were 5.19 billion Internet users worldwide, representing 64.6 per cent of the world’s population (Petrosyan, 2023). Because of the wide reach and high impact of these platforms, several online safety issues have arisen on them. These include controversial social media challenges, which are challenge activities initiated on the web (Zink, 2021). Not every challenge is harmful. For example, the Ice Bucket Challenge is a campaign to promote awareness of amyotrophic lateral sclerosis (ALS), in which people pour whole buckets of ice water over their heads to help the public understand the disease and to encourage donations for research (Trejos, 2017). Participants usually record videos of their challenges and post them on internet platforms, generating a lot of views and buzz. Among the harmful challenges, the Blue Whale Challenge (BWC) is one of the most striking and horrifying.

- Blue Whale Challenge

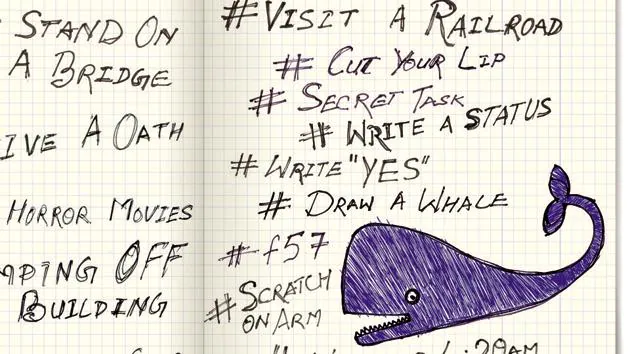

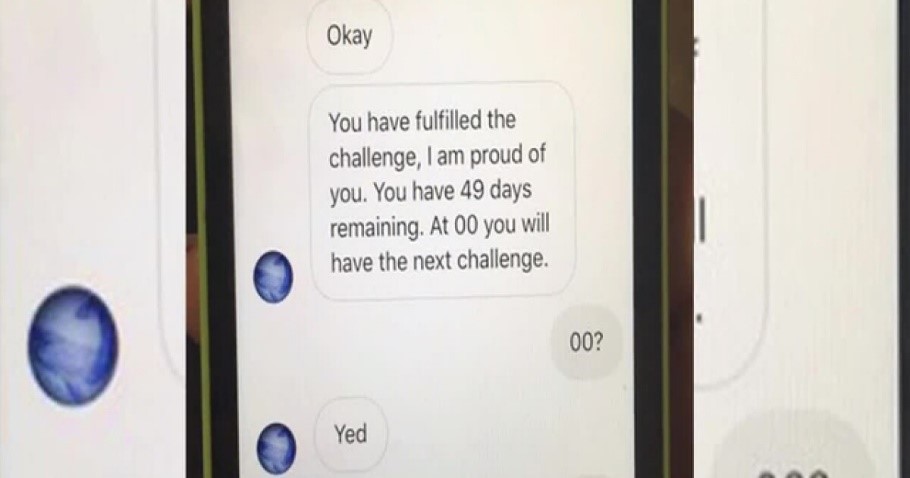

BWC is a cyber death game that originated in Russia and targets teenagers online (Upadhyaya & Kozman, 2022). The organisers instruct participants to complete 50 tasks in 50 days via the Internet, including self-harm, watching scary movies and waking up at midnight. BWC thus organises and abets self-harm and suicide through online platforms. In the process, many participants reportedly become increasingly distressed, and some take their own lives. The “game” spread through the Internet, from Russia to the rest of the world. As the challenge gained attention, its sinister ideas attracted psychologically vulnerable teens and had irreversible effects on many young people and families (Adeane, 2019).

(The above shown tasks are what are commonly believed to be a part of the Blue Whale challenge. © 2017 by Poulomi Banerjee is licensed under CC BY-ND 4.0 )


- How do YouTube and Reddit currently moderate BWC content?

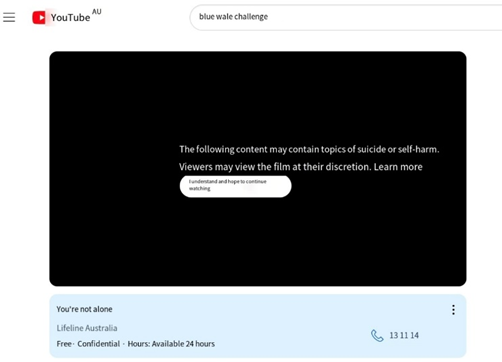

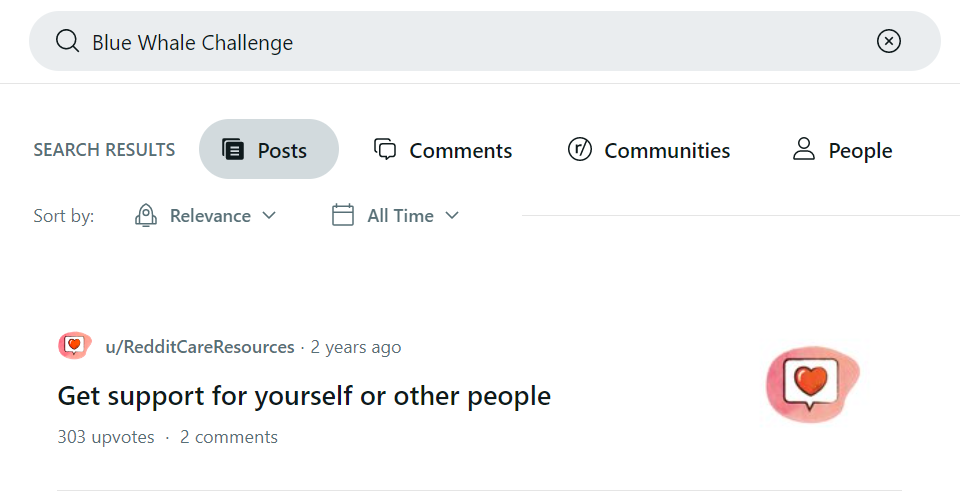

This egregious cyber challenge has caused public panic and raised widespread concerns about online safety and mental health. It has also tested how much control platforms have over the dissemination of content. To maintain public safety, platforms must restrict and remove harmful information, such as hate speech and violent content.

For example, warning messages pop up when users search for content about BWC on YouTube and Reddit, and both platforms prominently display 24/7 mental health helplines. In this way, the platforms help to control harmful content. By actively managing harmful content, platforms can reduce potential social conflict and criminal behaviour, thereby protecting the safety and well-being of the public.

(YouTube: Showing mental health support © 2023 is licensed under CC BY-ND 4.0 )

(RedditCareSources © 2023 is licensed under CC BY-ND 4.0 )

Moderation is complex

“Moderation is hard to examine, because it is easy to overlook—and that is intentional.”

— Tarleton Gillespie (Gillespie, 2018)

However, companies often face serious human rights and ethical issues when moderating content. Part of the public believes that content regulation undermines free speech and the right to privacy (United Nations, 2021). Mike Godwin, writing about self-regulation and freedom of speech, argues: “Give people a modem and a computer and access to the Net, and it is far more likely that they will do good than otherwise. This is because freedom of speech is itself a good … societies in which people can speak freely are better than societies in which they can’t” (Godwin, 1998). If platforms are too strict in controlling content, they may limit the space for social debate and innovation, undermining the diversity of ideas and the emergence of novel perspectives. At the same time, too much restriction may raise concerns about censorship, thus affecting public trust in the platform (United Nations, 2021). Undeniably, freedom of speech on platforms does increase the diversity of online content and enrich discussion, and self-regulation can stop much inappropriate content before it is posted. However, young people are usually the main target of harmful cyber challenges such as BWC, because adolescents in their formative years may not yet be mature enough to judge right from wrong reliably.

(Teen says she took part in online suicide game Blue Whale Challenge © 2017 by Christa Dubill is licensed under CC BY-ND 4.0 )

Researchers have investigated how harmful social media challenges such as BWC are portrayed on online platforms such as YouTube. They found that the widespread sharing of these behaviours can normalise self-harm and suicide among adolescents (Park et al., 2023). This can be explained by behavioural contagion theory, which holds that once one person has done something, others are more likely to do the same thing (Jones & Jones, 2002). This contagion effect is found in gambling, drug use and similar behaviours, and it also applies to viral internet challenges. Gillespie (2018) describes the network as ‘exquisite chaos’. In this complicated cyber world, young people who have not yet fully developed and matured are easily abetted and encouraged by undesirable information on the Internet. Therefore, moderate intervention by the platform is necessary.

(Frequent use of BWC emoticons © 2023 is licensed under CC BY-ND 4.0 )

Over-reliance on platform content control also has the potential to lead to information filtering and bias. Digital platforms are global (Gillespie, 2018). As public social networking services, they are available to users who vary widely in age, cultural background, education, mental health and so on. Both the users and the staff of these platforms come from different countries and have different cultural backgrounds. If only a few platforms decide what counts as “harmful” content, their views and positions may have a detrimental effect on users, and this centralised control can diminish the diversity of information and the public’s exposure to different viewpoints (Gillespie, 2018). Therefore, to avoid infringing on freedom of expression, platforms can establish transparent and fair rules and mechanisms to ensure fairness and balance in the decision-making process. Platforms should establish a user feedback mechanism and adopt multi-party participation and joint decision-making to keep content management strategies democratic and broadly representative. At the same time, platforms should combine appropriate technical means with manual review to ensure that harmful content is effectively filtered and misjudgements are minimised (Park et al., 2023).

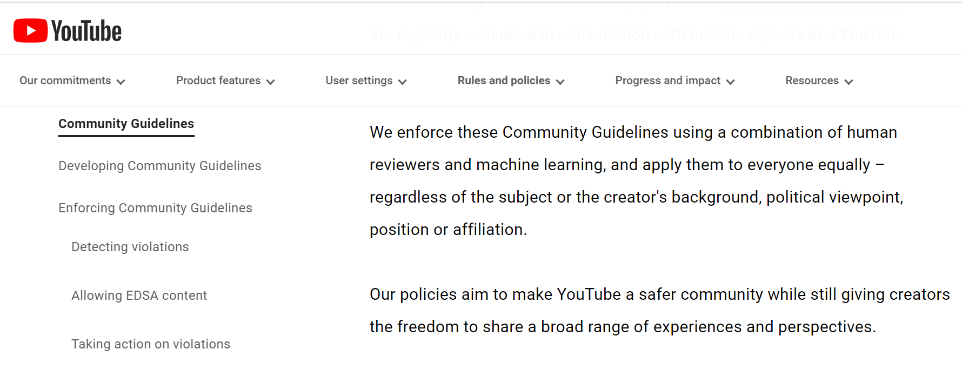

How does YouTube moderate content now?

YouTube, for example, is a widely used, open public video platform on which anyone can upload videos and share them with the world. Most of the time, users can easily find the content they are interested in. Underneath each video is an open comment section where users can speak their minds. YouTube portrays itself as open, impartial and noninterventionist (Gillespie, 2018). But this openness creates both opportunities and challenges. To strike a balance, YouTube controls content through its community guidelines as follows (YouTube, 2023):

- Remove content that violates our policies as soon as possible.

- Reduce the spread of harmful misinformation and content that violates our policy guidelines.

- Reward trusted and qualified creators and artists.

(YouTube: Community guidelines © 2023 is licensed under CC BY-ND 4.0 )

The particular ways in which platforms implement their strategies have consequences. Regardless of the specific rules, the important thing is that platforms think proactively and develop transparent practices to ensure that their control of content is justified. Transparent and fair rules and mechanisms, together with appropriate technical and human review methods, are necessary to ensure that harmful content is effectively filtered and misclassifications are reduced. In this way, platforms can provide a friendlier and safer online environment, which in turn increases user participation and satisfaction.

Conclusion

That is why I believe that platform regulation must exist. Platforms should put more energy and thought into striking a reasonable balance between the right to free speech and the degree of content regulation, so that public safety, user well-being and diversity of information are all protected. Harmful online activities like the Blue Whale Challenge are increasing, and they can only be better prevented if they are better understood.

References

Adeane, A. (2019, January 13). Blue Whale: What is the truth behind an online “suicide challenge”? BBC News. https://www.bbc.com/news/blogs-trending-46505722

Baruah, J., & Bureau, E. (2023, June 2). Blue Whale: Blue whale challenge and other “games” of death. The Economic Times. https://economictimes.indiatimes.com/magazines/panache/blue-whale-challenge-and-other-games-of-death/articleshow/60135835.cms?from=mdr

Brown, R. (2022, June 28). What is social media content moderation and how moderation companies use various techniques to… Medium. https://becominghuman.ai/what-is-social-media-content-moderation-and-how-moderation-companies-use-various-techniques-to-a0e38bb81162

Gillespie, T. (2018, June 26). Chapter 1. All platforms moderate. De Gruyter. https://www.degruyter.com/document/doi/10.12987/9780300235029-001/html

Godwin, M. (2003, June 20). Cyber rights: Defending free speech in the Digital age. MIT Press. https://direct.mit.edu/books/book/2846/Cyber-RightsDefending-Free-speech-in-the-Digital

Jones, D. R., & Jones, M. B. (2002, May 24). Behavioral contagion in Sibships. Journal of Psychiatric Research. https://www.sciencedirect.com/science/article/abs/pii/002239569290006A

Park, J., Lediaeva, I., Lopez, M., Godfrey, A., Madathil, K. C., Zinzow, H., & Wisniewski, P. (2023, April 25). How affordances and social norms shape the discussion of harmful social media challenges on Reddit. Human Factors in Healthcare. https://www.sciencedirect.com/science/article/pii/S277250142300009X?via%3Dihub#abs0001

Petrosyan, A. (2023, September 22). Internet and social media users in the world 2023. Statista. https://www.statista.com/statistics/617136/digital-population-worldwide/

Trejos, A. (2017, July 3). Ice bucket challenge: 5 things you should know. USA Today. https://www.usatoday.com/story/news/2017/07/03/ice-bucket-challenge-5-things-you-should-know/448006001/

United Nations. (2021, July 23). Moderating online content: Fighting harm or silencing dissent? https://www.ohchr.org/en/stories/2021/07/moderating-online-content-fighting-harm-or-silencing-dissent

Upadhyaya, M., & Kozman, M. (2022, October 20). The Blue Whale Challenge, social media, self-harm, and suicide contagion. Psychiatrist.com. https://www.psychiatrist.com/pcc/depression/suicide/blue-whale-challenge-social-media-self-harm-suicide-contagion/

YouTube. (2023). YouTube Community Guidelines & Policies – how YouTube works. YouTube. https://www.youtube.com/howyoutubeworks/policies/community-guidelines/

Zink, H. (2021, June 17). Dangerous social media challenges to watch out for. Medium. https://medium.com/safeguarde/dangerous-social-media-challenges-to-watch-out-for-295efec6cea7