Whilst digital giants are somewhat motivated to uphold user satisfaction and corporate image, their exploitation of user data and opaque self-regulation techniques suggest they’re ultimately driven by commercial interests.

Self-regulation alone is ineffective, and in an age of disinformation, independent regulatory oversight is needed to increase accountability and ensure users’ best interests are upheld in the digital space.

Increasing domestic regulation is one way to help moderate content so it better aligns with the unique social and cultural environment in which the platforms operate.

Which giants, and why?

We will explore the regulation of two platforms: Facebook and X, formerly Twitter. These platforms were chosen because they have recently and regularly been implicated, in the media and in legal proceedings, in failures of self-regulation and attempts to resist increased oversight.

Moreover, these platforms are extremely influential and ubiquitous in the everyday lives of Australians. Facebook hosts nearly 16 million active users in Australia – meaning that an astonishing 65% of the country’s population are interacting with content on this platform (Correll, 2023). Similarly, X boasts around 6 million active monthly users in Australia, a number which continues to grow, despite a year of tumultuous regulation (Tong, 2022).

65% of Australians are active Facebook users

Correll, 2023

With multiple touch points to a substantial portion of the population, these platforms have access to an unparalleled breadth and depth of personal and sensitive data. Their algorithms also hold a powerful role in choosing which views and voices are perpetuated and circulated amongst society, influencing broader democratic outcomes.

Therefore, critically examining the regulatory landscape is essential to ensure that tech giants operate in ways that benefit society, protect users from harm, and uphold responsible and ethical practices in the digital space.

The current state of regulation

Social media giants operate independently, using self-regulation tools like community guidelines to moderate their platforms.

However, as relatively new, fluid and interactive online spaces, they aren’t bound by the same ethical standards or regulation as traditional media. Their opaque processes of content moderation are often “unclear, subjective, and discriminatory”, leading to the proliferation of misleading or harmful content and disinformation (Nurik, 2019, p.2878).

To tackle the overwhelming volume of content circulating across their platforms, digital giants craft content regulation policies to be easily algorithmicised for broad international moderation.

Hence, they rely on a “reactive, ad hoc” policy-making approach and use “public shocks” to drive forward changes, whereby platforms are temporarily exposed or scandalised, and users enter “a cycle of indignation and pushback” (Flew et al, 2019, p.35). For example, when X recently announced it was limiting unverified users to reading 600 posts a day, there was major pushback from users, and X increased the limit soon after.

However, this dissatisfaction rarely lasts, as most users lack the sustained motivation to shield themselves from the dangers of the platform.

Although nearly half of Australians reportedly distrust social media platforms, and 89% are concerned about the spread of fake news, millions of users continue to sign up and use these platforms, blissfully ignorant of the harms.

Moreover, in their haste to gain access to digital realms, users often bypass terms and conditions. Platforms encourage this behaviour, intentionally making conditions lengthy, vague and complex to lower the chance of legal liability, and designing app interfaces that reduce the visibility and accessibility of those conditions (Yerby & Vaughn, 2022).

This is arguably unethical, as it undermines transparency, user privacy, and informed consent, and exploits user indifference. It is also consequential, as a lack of awareness from users further erodes the accountability of tech giants, encouraging a cycle of apathy in addressing regulatory issues.

Domestic watchdogs can help address this, ensuring user rights are advocated for, whether users themselves are aware of them or not.

The core of the problem

But why does it matter? Locating the core of the issue requires a closer examination of the cascading impacts that social media has on broader society.

For example, ahead of The Voice referendum in October this year, the Australian Electoral Commission (AEC) has struggled to contain disinformation and violence on X. The governing body has faced difficulty in getting X to remove tweets that encourage users to falsely register on the electoral roll or that threaten AEC workers, with X claiming the content does not violate its terms of service.

Disinformation like this has wide-reaching implications for the trust citizens place in the electoral process and undermines the calibre of political discourse, which directly impacts the functioning of a democracy (Bogle, 2023). It also demonstrates the negligible power that governing bodies currently hold over social media giants, who are extremely powerful corporate lobbyists (Popiel, 2018).

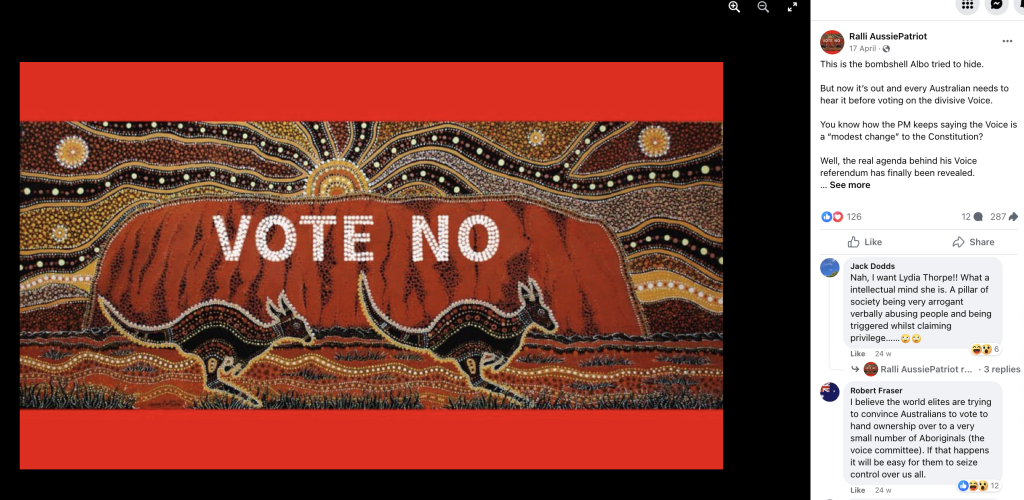

Similarly, on Facebook, there have been problems with regulating accounts using images generated by artificial intelligence to spread anti-Voice messages with “the veneer of legitimacy” (McIlroy, 2023).

For example, an artwork by Voice supporter and Indigenous artist Danny Eastwood has been altered so that its original “VOTE YES” now reads “VOTE NO”. Despite the artist lodging a complaint with Facebook, the image has continued to be shared by far-right channels on the platform, unethically obscuring the original message portrayed by Eastwood.

These examples demonstrate the apathy that comes with self-regulation, as platforms consistently deny accountability.

However, it is also the case that when social media giants have responded to complaints, such as when Facebook took down no-campaign ads posted by the Institute of Public Affairs, they have been accused of “interfering in the democratic process” (Butler, 2023).

This demonstrates the difficulty in striking a balance in regulation: deciding in which situations to intervene and how to reconcile competing value systems (Gillespie, 2018).

A difficult solution

In this way, finding solutions to the regulation of global social media platforms is akin to killing a hydra – cut off one problem and four more grow in its place.

Some academics like Flew (2018) have suggested that an increase in domestic regulation could mean giving independent watchdogs like the Australian Communications and Media Authority (ACMA) and the Australian Competition & Consumer Commission (ACCC) more power. This could include the ability to oversee content regulation and complaints and enforce punishments for non-compliance.

The effectiveness of this strategy was shown by the successful implementation of the News Media Bargaining Code in 2021.

This would also arguably provide a content regulation strategy more closely aligned to Australia’s unique cultural landscape. Independent authorities are better equipped to monitor for disinformation than global media conglomerates like Facebook or X, as they have a more nuanced understanding of local social affairs. This is particularly relevant for political events like The Voice, where impartial oversight from non-politically aligned bodies is vital.

Moving towards more domestic regulation may also alleviate pressures on global content moderation value chains, which pose severe ethical and social issues (Ahmad & Krzywdzinski, 2022).

On the other hand, there are concerns that domestic regulation will lead to a dangerous “splinternet”, where different states seek to impose varying legislation based on unique legal frameworks and domestic expectations (Flew et al, 2019).

Some argue this would unfairly limit free speech, dampen creativity and productive collaboration, and threaten information flows between countries and communities (Greengard, 2018). This may increase echo chambers and further isolate minority groups or those living under heavy censorship.

While I agree to some extent, Australian watchdogs only choose to remove or filter content that is untrue, violent or discriminatory, and thus do not constrain positive collaboration or sharing online. Moreover, I think the ethical imperative to protect users outweighs concerns of a “splinternet”.

With so many Australians relying on these platforms daily, increased oversight of tech giants is needed to protect users from disinformation, even if individuals themselves are unaware of the dangers, as it’s critical to the health of broader democracy.

All in all, while I agree with Flew et al. (2019) that “no regulatory regime will ever be completely effective” (p.42), this doesn’t mean that Australia shouldn’t try. Independent regulatory bodies should have more authority to neutralise commercial objectives to protect users in this rapidly evolving digital networked society.

References

Ahmad, S., & Krzywdzinski, M. (2022). Moderating in obscurity: how Indian content moderators work in global content moderation value chains. In Digital work in the planetary market (pp. 77-95). Cambridge, MA, Ottawa: The MIT Press, International Development Research Centre. https://doi.org/10.7551/mitpress/13835.003.0008

Banks, A. (2022, March 22). 89% of Australians are worried about the spread of fake news. Mumbrella. https://mumbrella.com.au/89-of-australians-are-worried-about-the-spread-of-fake-news-729756

Bogle, A. (2023, September 21). AEC struggles to get Twitter to remove posts that ‘incite violence’ and spread ‘disinformation’ ahead of voice. Guardian Australia. https://www.theguardian.com/australia-news/2023/sep/21/aec-twitter-x-struggles-posts-violence-disinformation-removal

Butler, J. (2023, March 2). Voice referendum no campaign accuses Facebook of ‘restricting democracy’ over ad removal. Guardian Australia. https://www.theguardian.com/australia-news/2023/mar/02/voice-referendum-no-campaign-accuses-facebook-of-restricting-democracy-over-ad-removal

Correll, D. (2023, July 1). Social Media Statistics Australia – June 2023. Social Media News. https://www.socialmedianews.com.au/social-media-statistics-australia-june-2023/

Flew, T. (2018). Platforms on trial. Intermedia, 46(2), 24-29. https://www.iicom.org/intermedia/intermedia-july-2018/platforms-on-trial/

Flew, T., Martin, F. & Suzor, N. (2019). Internet regulation as media policy: Rethinking the question of digital communication platform governance. Journal of Digital Media & Policy, 10(1), 33–50. https://doi.org/10.1386/jdmp.10.1.33_1

McIlroy, T. (2023, July 28). How online disinformation is hijacking the Voice. The Australian Financial Review. https://www.afr.com/politics/federal/how-online-disinformation-is-hijacking-the-voice-20230721-p5dq7p

Nurik, C. (2019). “Men are scum”: Self-regulation, hate speech, and gender-based censorship on Facebook. International Journal of Communication, 13, 21. https://ijoc.org/index.php/ijoc/article/view/9608/2697

Popiel, P. (2018). The Tech Lobby: Tracing the Contours of New Media Elite Lobbying Power. Communication, Culture and Critique, 11(4), 566–585. https://doi.org/10.1093/ccc/tcy027

Ralli AussiePatriot. (2023, April 17). This is the bombshell Albo is trying to hide. But now it’s out and every Australian needs to hear it before voting on the divisive Voice. [Image attached] [Image post]. Facebook. https://www.facebook.com/photo.php?fbid=6355841044467761&set=pb.100001257855224.-2207520000&type=3

Roy Morgan. (2019, July 19). ABC still most trusted | Facebook improves. Roy Morgan. https://www.roymorgan.com/findings/abc-still-most-trusted-facebook-improves

Yerby, J., & Vaughn, I. (2022). Deliberately confusing language in terms of service and privacy policy agreements. Issues in Information Systems, 23(2). https://doi.org/10.48009/2_iis_2022_112

This work is licensed under a Creative Commons Attribution-NonCommercial 4.0 International License.