Concerns about internet regulation have been rising as false information, deceptive news and even criminal activity online increasingly affect our daily lives. Internet platforms have replaced traditional news media and become an essential way for people to learn about the world. There is almost no barrier to posting on the internet, so anyone can share their thoughts and knowledge. However, this openness can be exploited for unethical and unlawful behaviour. Social media platforms have accumulated an enormous user base around the world and have become the focus of this concern. Facebook, launched in 2004, is one of the most widely used social media platforms worldwide, with around 3 billion monthly users in 2023. Several scandals surrounding Facebook have raised global concerns about platform regulation. Using Facebook as an example, this paper discusses why internet platforms need to be regulated. Firstly, I will discuss inequality and discrimination on online platforms, where certain groups face unfair treatment or exclusion because of algorithmic bias and hate speech. Subsequently, I will argue that platforms lack accountability under self-regulation. As arguably the largest social media platform, Facebook has been involved in several scandals, leaving the public worried that an even worse scandal may be hidden in its databases.

Inequality and Discrimination

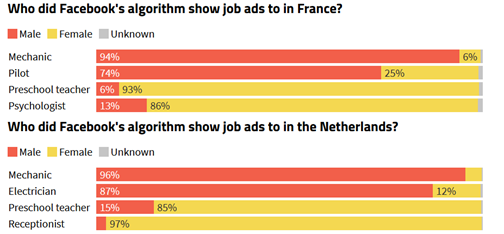

As the use of online platforms has grown, inequality and discrimination have become a persistent problem. Different platforms have different policies on this issue. Facebook requires that listings and commerce Messenger threads ‘must not wrongfully discriminate or suggest a preference for or against people because of a personal characteristic.’ Phrases like ‘only Christians allowed’ or ‘under the age of 35’ are not allowed to appear in its advertisements. (Meta discrimination policy, n.d.) Even though these policies exist, the delivery of ads is still algorithmically biased. One investigation found that job ads on Facebook are shown disproportionately to one gender even though the jobs themselves are gender neutral. For example, ads for mechanic and pilot jobs are far more likely to be shown to male users, while ads for teacher and receptionist jobs are more likely to be shown to female users. (Global Witness, 2023) A group of researchers also found that Facebook’s algorithm delivers ads according to who is pictured in the ad: images of Black people are more likely to be delivered to Black users, and images of young women are more likely to be delivered to older men. (Levi Kaplan, 2022) One reason this occurs is that certain populations are underrepresented in the data used to build an algorithm, which creates a systemic disadvantage for them. (Friis S, 2023) Ad algorithms are built to target specific groups, but they should not reinforce sex discrimination or narrow the opportunities available to users. Algorithmic biases ‘perpetuate and even amplify existing inequalities, leading to discrimination against marginalized groups… It is crucial to identify and mitigate bias in AI to ensure that these systems are fair, equitable, and serve the needs of all users.’ (Ferrara, 2023, p. 4) Algorithms are more than a commercial tool for business gain; they are an ecosystem that is already inseparable from the functioning of society, and they should therefore be regulated to limit inequality and discrimination.

Hate speech

Beyond the inequality and discrimination produced by algorithms, hate speech is another major concern on platforms. Platforms have been criticized for struggling to reduce online hate speech, harassment and even the promotion of terrorism. (Flew T, 2019) According to the United Nations (UN), social media played a significant role in the 2017 Rohingya genocide in Myanmar’s Rakhine state. Facebook has been described as a ‘useful instrument’ for spreading hate speech, and it has been accused of ‘fueling the normalization of extreme violence’ in Ethiopia. A $1.6 billion lawsuit filed in Kenya’s High Court accuses Meta of amplifying hate speech and incitement to violence. (Crystal C, 2023) This shows how influential social media can be, and that the self-regulation of these platforms is failing to protect people from such atrocities.

Accountability

The public’s biggest concern about platforms is accountability, and the public is increasingly aware that regulation is needed before further damage is done to society. As the leading social media platform, Facebook has been involved in many scandals that are alarming to its users. Some of the most outrageous include the 2016 US election and the Cambridge Analytica scandal, which show Facebook’s involvement in the spread of ‘fake news’ and ‘manipulation of electoral politics; privacy breaches and data misuse; and abuse of market power.’ (Flew T, 2019) In the Cambridge Analytica scandal, Cambridge Analytica collected the personal data of tens of millions of Facebook users for political advertising, causing the largest data leak in Facebook’s history. Facebook’s owner, Meta, agreed to pay $725 million to resolve a class-action lawsuit over this issue. (Raymond, N., 2022) This settlement is a reactive punishment for Facebook, imposed after the damage had already been done. Proactive regulation should be adopted to avoid such situations in the future, in which platforms control content without our consent. ‘Continued self-regulation is not the right answer when it comes to dealing with the abuses we have seen on Facebook.’ (Sen. Lindsey Graham [R-SC], after Facebook CEO Mark Zuckerberg’s appearance before the US Senate, 10 April 2018 cited in Flew T, 2019) Platforms like Facebook hold data from across the world. We need regulations that protect our data and hold platforms responsible for future incidents, so that profit-oriented platforms become more careful about fact-checking, data protection and similar obligations.

Conclusion

To conclude, platforms carry a great deal of responsibility because they hold large amounts of personal data. There have been many incidents in which users’ data was exposed and used for deceptive purposes without their consent, such as the 2016 US election and the Cambridge Analytica scandal. Algorithmic bias and hate speech remain ongoing problems that cause inequality and discrimination across the internet. As profit-oriented businesses, these platforms lack the accountability needed to solve these problems themselves. We should take a more proactive stance and introduce more regulation to prevent future incidents.

References

Crystal, C. (2023) Facebook, Telegram, and the Ongoing Struggle Against Online Hate Speech. Carnegie Endowment for International Peace. https://carnegieendowment.org/2023/09/07/facebook-telegram-and-ongoing-struggle-against-online-hate-speech-pub-90468

Friis, S & Riley, J. (2023) Eliminating Algorithmic Bias Is Just the Beginning of Equitable AI. In AI and Machine Learning. Harvard Business Review. https://hbr.org/2023/09/eliminating-algorithmic-bias-is-just-the-beginning-of-equitable-ai

Ferrara, E. (2023). Fairness And Bias in Artificial Intelligence: A Brief Survey of Sources, Impacts, And Mitigation Strategies.

Flew, T., Martin, F., and Suzor, N. (2019), ‘Internet regulation as media policy: Rethinking the question of digital communication platform governance’, Journal of Digital Media & Policy, 10:1, pp. 33–50, doi: 10.1386/jdmp.10.1.33_1

Global Witness. (2023, June 12). Retrieved from https://www.globalwitness.org/en/campaigns/digital-threats/new-evidence-of-facebooks-sexist-algorithm/

Levi Kaplan, N. G. (2022). Measurement and analysis of implied identity in ad delivery optimization. Proceedings of the 22nd ACM Internet Measurement Conference (IMC ’22).

Meta discrimination policy. (n.d.). Retrieved from https://www.facebook.com/policies_center/commerce/discrimination

Raymond, N. (2022) Facebook parent Meta to settle Cambridge Analytica scandal case for $725 million. Reuters. https://www.reuters.com/legal/facebook-parent-meta-pay-725-mln-settle-lawsuit-relating-cambridge-analytica-2022-12-23/