Content moderation on digital platforms is the ongoing, evolving practice of actively monitoring and managing user-generated content. Platforms moderate by removing problematic content, labelling it with warnings, or allowing users to block and filter content on their own (Grygiel & Brown, 2019). It therefore plays a crucial role in maintaining a safe and respectful online environment. Content moderation now takes place on many digital media platforms, as I describe below.

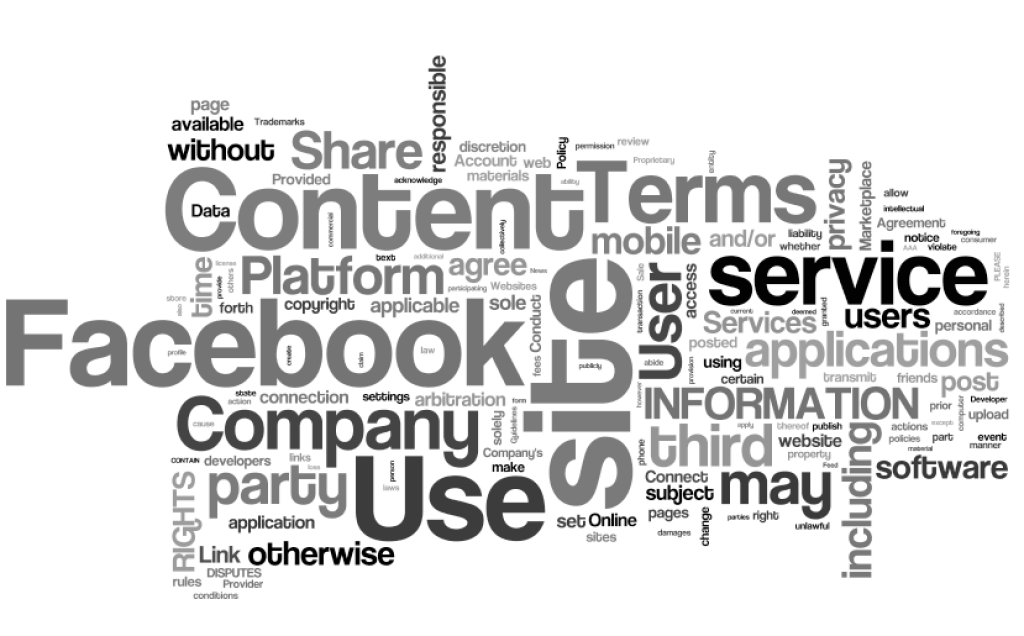

Terms – Facebook by Auntie P is licensed under CC BY-NC-SA 2.0.

On Facebook, users appear to have found ways to circumvent the content review policy. Many describe this circumvention as a technical challenge rather than a policy violation. This suggests that, in the absence of a strong compliance culture, the deterrence model of content moderation that Facebook employs may be ineffective (Gillett et al., 2023). Platforms should therefore cultivate users’ sense of social compliance online in order to create safer and more inclusive digital environments.

“facebook” on Facebook offices on University Avenue by pshab is licensed under CC BY-NC 2.0.

A harmonious online community also starts with securing accounts and reducing the risk of social media accounts being hacked. When victims of Facebook account hijacking share their experiences, other users are better able to identify scams, manage the private content of their online accounts, and avoid scams that stem from privacy breaches.

Facebook ‘friends’ hijacked in scam by Simon Willison is licensed under CC BY-NC 2.0.

These real-world cases illustrate the importance of moderation: where governance is lacking, privacy problems and other online risks follow.

References:

Grygiel, J., & Brown, N. (2019). Are social media companies motivated to be good corporate citizens? Examination of the connection between corporate social responsibility and social media safety. Telecommunications Policy, 43(5), 445-460. https://doi.org/10.1016/j.telpol.2018.12.003

Gillett, R., Gray, J. E., & Valdovinos Kaye, D. B. (2023). “Just a little hack”: Investigating cultures of content moderation circumvention by Facebook users. New Media & Society. https://doi.org/10.1177/14614448221147661

Serrano, S. (2023, May 26). Facebook hijack victim shares experience to help other users recognize scam. Tahlequah Daily Press. https://www.tahlequahdailypress.com/news/facebook-hijack-victim-shares-experience-to-help-other-users-recognize-scam/article_2e8b0170-fb49-11ed-a3f1-8b9b5c7846a1.html