Ethical Implications Of Artificial Intelligence In The Digital Future Of The Internet by Lillian Neylon is marked with CC0 1.0 Universal

Thesis statement:

The ethical implications of artificial intelligence in the digital future of the internet are at the forefront of contemporary concerns, as the widespread adoption of AI technology raises questions about privacy, bias, accountability, and the overall societal impact.

So, let’s start.

In today’s digitally interconnected world, artificial intelligence (AI) has emerged as a transformative force, reshaping how we interact with the internet. AI-driven algorithms power our social media feeds, recommend products, and inform decision-making processes across various domains. While the integration of AI into our digital lives brings about undeniable benefits, it also raises profound ethical questions.

This blog post sets out to explore the ethical dimensions of AI’s burgeoning role in shaping the digital future. As we embrace the convenience and efficiency offered by AI-driven technologies, we find ourselves confronted with concerns regarding privacy, fairness, accountability, and the broader societal consequences. These issues are not just theoretical but are increasingly affecting our everyday experiences online.

Over the course of this post, we will dissect these ethical challenges. By gaining a deeper understanding of AI’s ethical implications, we can collectively work towards a digital future that reflects our values and aspirations while harnessing the incredible potential that AI offers.

Let’s Just Say I’m Concerned!

The Rise of AI in The Digital Future

While the adoption of AI in our digital future holds promise and potential, it also harbours concerns that extend beyond the immediate horizon. The research article ‘Evolution of Artificial Intelligence Research in Technological Forecasting and Social Change: Research Topics, Trends, and Future Directions’ by Dwivedi et al. (2023) makes it evident that AI’s rapid evolution requires us to carefully consider its long-term implications. The article offers a concise overview of the evolution and multidisciplinary nature of AI research, underlining its societal and economic significance. While it introduces the idea of an integrative systematic review to address gaps in understanding, it does not delve into specific research findings or methodologies (Dwivedi et al. 2023). The rise of AI is happening now, and society must be able to understand both the benefits and the disadvantages it brings, a balance that some articles miss.

So, What Are Our Privacy Concerns?

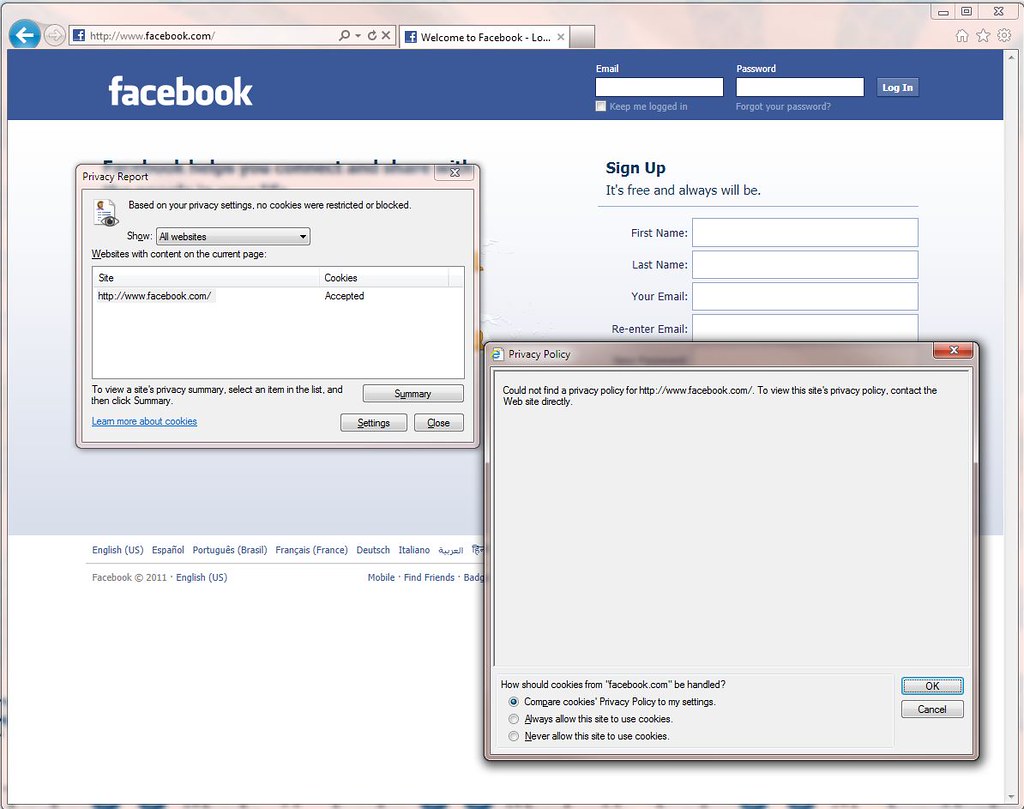

This section delves into the paramount issue of privacy within AI technologies. Given their ability to collect, analyse and manipulate vast amounts of data, it doesn’t look good. However, the article ‘Privacy Issues of AI’ by C. Bartneck and co-authors (2021) offers valuable insights into this many-layered problem. It examines how AI can compromise users’ privacy through its capacity to gather personal information, ranging from online behaviour to sensitive health data, and even to predict individual preferences with scary accuracy. For instance, AI-driven programmatic advertisements rely on users’ private data, gathered from their online activity, to target them with personalised content. The article then elaborates on the potential misuse of this personal data, touching upon concerns like political manipulation and the rise of deepfake technology on social media (C. Bartneck et al. 2021). These developments emphasise the critical need for a strong discourse on the boundaries of privacy in our new data-driven world.

So, I’m concerned about Privacy, but what about Bias and Fairness in AI Technologies?

In this section, we delve deeper into the pressing concerns of bias and fairness within AI algorithms and systems, an issue that stands at the forefront of contemporary AI discussions. With AI companies and billionaires promising to revolutionise our lives with these helpful algorithms, society forgets about the bias that may be lurking underneath them. As the article ‘Diversity and Bias in AI: Navigating the Complex Landscape’ by Stephanie Alvarez (2023) illuminates, AI systems are often trained on historical data reflecting societal prejudices and can inadvertently adopt the biases found in that data. This perpetuation of bias not only undermines the core ethical principles of fairness and equality but can also deepen existing social inequalities (Alvarez, 2023). For instance, biased AI in hiring processes can disadvantage certain groups, leading to employment disparities. Moreover, the article presents compelling examples of AI bias in areas such as criminal justice, where predictive algorithms have been shown to target minorities and marginalised communities. These examples emphasise the importance of addressing bias in upcoming AI systems as we navigate their integration into our digital culture.

But who is accountable for this?

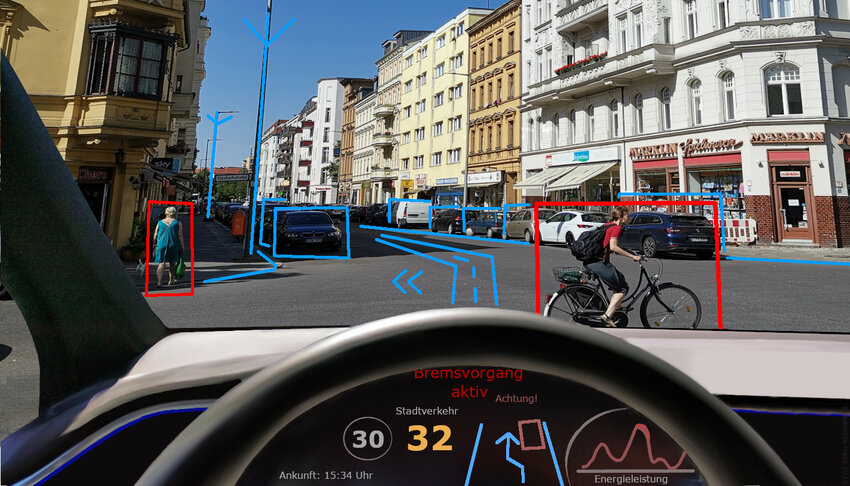

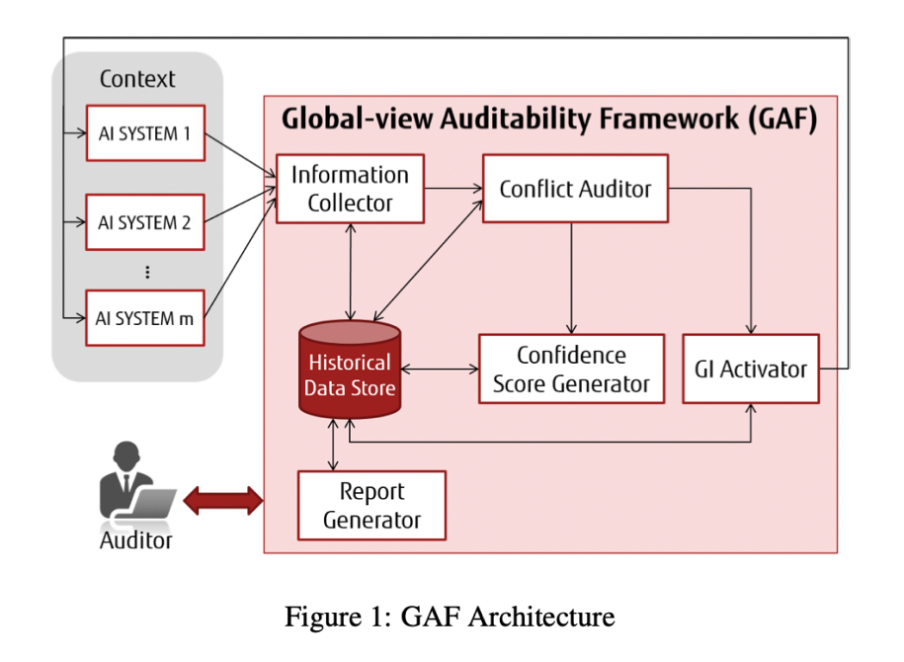

An intricate and difficult task surrounding AI in modern society is assigning accountability and responsibility when an algorithm makes a mistake. An article I read recently, ‘Putting Accountability of AI Systems into Practice’ by B. Miguel and co-authors (2021), introduced a ground-breaking concept called the Global Accountability Framework (GAF), which is designed to manage AI systems in domains such as the automotive and motor insurance industries. However, this innovative framework raises crucial questions about accountability and ethics within AI overall. The AI systems relay data about their perceptions to the GAF, which acts as a central player in resolving conflicts and rectifying disparities among them (B. Miguel et al. 2021). Herein lies the fundamental ethical question: when AI systems mess up, who should bear the responsibility? The GAF serves to facilitate decision-making processes, offering vital traceability and a means of inspecting AI systems’ actions. However, it refrains from making ultimate decisions regarding responsibility, underscoring the importance of human involvement in addressing AI-related errors.

AI developers and operators are entrusted with the responsibility of creating, maintaining and supervising AI systems to ensure their ethics and accuracy. However, as AI becomes increasingly autonomous, the assignment of blame and liability becomes more intricate. The accountability of AI systems pivots on transparency, reliability, and ethical adherence, necessitating ongoing human interaction and intervention.

But Are There Counter Arguments to This?

In response to the numerous ethical concerns surrounding AI systems, there are several common counter-arguments worth considering before deciding whether to use AI. One counter-argument contends that AI systems are devoid of personal biases and emotions, making them more objective than humans. The article ‘Human- versus Artificial Intelligence’ by J.E. Korteling and co-authors (2021) argues that by relying on algorithms and data, AI can help eliminate the biases that often plague human decision-making processes. However, this viewpoint oversimplifies the issue. AI systems are trained on historical data that may contain inherited biases, leading to algorithmic bias and perpetuating social inequalities (J.E. Korteling et al. 2021). Research, such as the study conducted by Obermeyer et al. in 2019, has revealed that AI algorithms used in healthcare can exhibit racial bias, resulting in unequal access to medical care. Therefore, while AI may lack personal biases, it can inadvertently inherit societal prejudices.

Another counter-argument puts forward that the benefits of AI technology, such as increased efficiency and productivity, outweigh the ethical concerns. The article ‘The Ethical Implications of Artificial Intelligence (AI) For Meaningful Work’ by S. Bankins and P. Formosa (2023) contends that AI has the potential to revolutionise various industries. While AI undeniably offers numerous benefits, it is essential to recognise that ethical considerations and technological progress are not mutually exclusive. Ethical guidelines and responsible AI development can coexist with technological advancements. Overall, while counter-arguments may highlight the potential benefits of AI and the role of developers, they do not diminish the significance of ethical concerns. Evidence and reasoning support the thesis that ethical considerations are integral to the responsible development and deployment of AI systems.

In Conclusion…

In the era of rapidly advancing AI technologies, ethical considerations are undeniably central to the responsible integration of AI systems into our lives. As explored in this blog post, issues related to privacy, bias, accountability, and transparency demand our attention and vigilance. While counter-arguments emphasise the potential advantages of AI and the role of developers, ethical concerns remain paramount. Striking a balance between technological progress and ethical responsibility is imperative. By doing so, we can harness the transformative power of AI while ensuring that it aligns with our values and respects fundamental human rights.

Bibliography

Bankins, S., Formosa, P. (2023). The Ethical Implications of Artificial Intelligence (AI) For Meaningful Work. J Bus Ethics 185, 725–740. https://doi.org/10.1007/s10551-023-05339-7

Dwivedi, Y. K., Sharma, A., Rana, N. P., Giannakis, M., Goel, P., Dutot, V. (2023). Evolution of artificial intelligence research in Technological Forecasting and Social Change: Research topics, trends, and future directions. Technological Forecasting and Social Change. 192. 122579. ISSN 0040-1625. https://doi.org/10.1016/j.techfore.2023.122579

Bartneck, C., Lütge, C., Wagner, A., Welsh, S. (2021). Privacy Issues of AI. In: An Introduction to Ethics in Robotics and AI. SpringerBriefs in Ethics. Springer, Cham. https://doi.org/10.1007/978-3-030-51110-4_8

Alvarez, C. S. (2023, September 23). Diversity and Bias in AI: Navigating the Complex Landscape. Medium. https://medium.com/@globalstephaniechavez/diversity-and-bias-in-ai-navigating-the-complex-landscape-9dd8e7d98b28

Miguel, B.S., Naseer, A., Inakoshi, H. (2021). Putting Accountability of AI Systems into Practice. 5278. Proceedings of the Twenty-Ninth International Joint Conference on Artificial Intelligence (IJCAI-20). https://www.ijcai.org/proceedings/2020/0768.pdf

Korteling, J. E., Van De Boer-Visschedijk, C. G., Blankendaal, M. A. R., Boonekamp, C. R., Eikelboom, R. A. (2021). Human- Versus Artificial Intelligence. Front. Artif. Intell. 4:622364. https://doi.org/10.3389/frai.2021.622364
