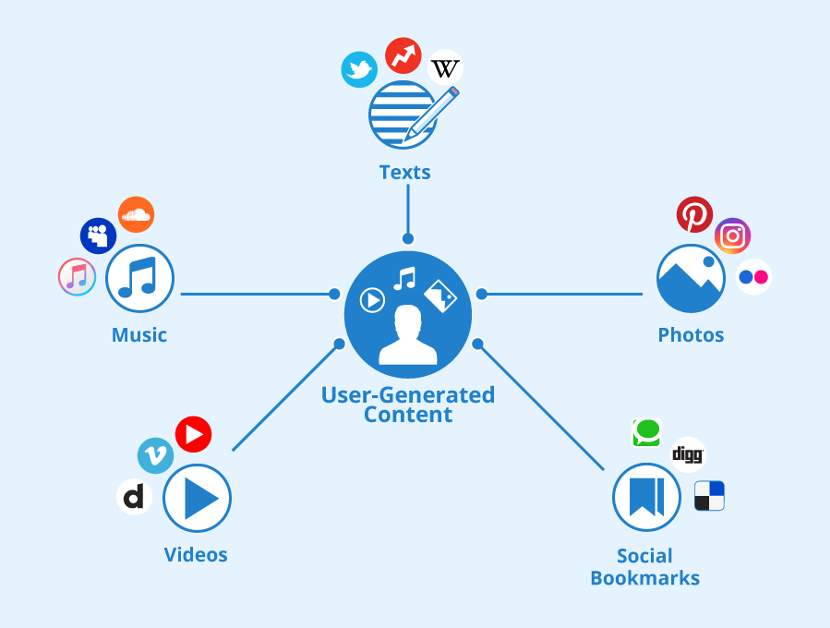

“User-Generated Content” by Seobility is licensed under CC BY-SA 4.0

The rapid growth of mobile electronic devices and the Internet has transformed the daily lives of most people. People use mobile devices and online technology to be entertained, to communicate, and to learn, but these technologies also carry significant risks. Cyberbullying, contact with strangers, sexual messages, and pornography are some of the risks that come with their presence in people’s lives (Livingstone & Smith, 2014).

The Change from Web 1.0 to Web 2.0 to Web 3.0 Has Given Internet Users More Autonomy and Participation

Web 3.0 has strengthened users’ rights to own and control their own data. Various social media platforms were born out of this open environment of participation, expression, and social connection. People can use social media to reach each other more directly, gaining new opportunities for wider communication and interaction (Gillespie, 2018). While this highly free internet may seem like a utopia on the surface, dangers are creeping in. Pornography, violence, misunderstandings, and illegal, abusive, and hateful content increasingly appear on various platforms (Gillespie, 2018).

“Message from artificial intelligence..” by Michael Cordedda is licensed under CC BY 2.0.

Cyberbullying

A lot of positive content is shared on the internet, but so is a lot of negative content. Some unscrupulous individuals take advantage of the freedom of content creation online to harm others. With the growth of social media and technology, cyberbullying has become a major source of misery for teens. Here is a real-world example. Megan had suffered from severe depression since she was eight years old and regularly took antipsychotics and antidepressants to manage it (Ingham, 2023). She was targeted online by a fake account posing as a boy. “He” mocked Megan in private, leaked her personal information to the Internet, and publicly declared that the world would be a better place without her. Megan, who was already vulnerable because of her depression, believed it. She ended her life in her bedroom after hearing that the fake “boy” was the kind of boy girls would kill themselves for. She was only 13 years old (Ingham, 2023).

Why is Content Moderation Important?

Because negative content can seriously harm users and the wider community, content moderation plays a big role in limiting the space available to such content. Content moderation is the organized practice of screening user-generated content (UGC) posted to Internet sites, social media, and other online outlets. It determines whether such content is appropriate for a given website, region, or jurisdiction (Roberts, 2017).

Platforms’ Self-Regulation of Content Can Have Some Positive Effects

Platforms act as norm-setters, interpreters of the law, arbiters of taste, adjudicators of disputes, and enforcers of any rules they choose to establish in content moderation (Gillespie, 2018). Content moderation by platforms can prevent the spread of harmful content to protect the safety of users. Platforms can remove abusive and argumentative comments to ensure a positive online social environment. Platform content moderation also prevents the platform from being used for illegal activities, such as online fraud, false advertising, and the distribution of pornographic content. It also prevents the misuse of users’ personal information and reduces the risk of malicious data collection and privacy violations.

“The Terror of War but more commonly” by manhhai is licensed under CC BY 2.0 DEED

Platforms Face Some Challenges in Self-Regulation

Because the world is so diverse, however, platforms have no clear line to follow when regulating content, and self-regulation brings challenges of its own. “The Terror of War” is a photograph by Associated Press photographer Nick Ut. Taken in 1972, it went on to win a Pulitzer Prize. The photo was included in an article published in September 2016 by Norwegian journalist Tom Egeland, but Facebook moderators deleted Egeland’s post because the photo showed overly painful images of war and the nudity of a minor (Gillespie, 2018). Media and citizens across the globe criticized Facebook’s decision. Some people think Facebook should not have deleted the post, because the photo reminds them of the effects of war. Facebook’s vice president, Justin Osofsky, explained that certain images may be offensive in one part of the world and acceptable in another (Gillespie, 2018). People around the globe come from different cultures and backgrounds, so they judge the same image differently, and there is no clear line for images like this one. It is also difficult to assess millions of posts each week on their individual circumstances. As a result, platforms struggle to decide where to draw red lines for content moderation. Many users feel that platforms restrict their freedom of expression. Some also worry that platforms collect too much personal information when they moderate content, and that this information may be leaked or passed to third-party companies for profit.

Should Platforms Work With Governments to Do Content Moderation?

Government involvement in content regulation can increase transparency and fairness. It can prevent abusive behavior and improper censorship by platforms and third parties. For many media businesses, Google and Facebook are unavoidable business partners, because the media rely on the data held by Google and Facebook to find the content audiences want and to secure advertising revenue (Australian Government, 2019). What internet users find on search engines may be paid advertisements rather than organic content. Platforms and third-party companies can also place internet users in “filter bubbles” that control the content they receive, and users’ privacy can be compromised.

The government uses the Privacy Act and the Australian Consumer Law to require major media platforms to be more transparent about data sharing. These laws include specific rules on stopping the use or disclosure of personal information, including protection for the personal information of children and vulnerable groups. At the time of the Australian Human Rights Commission’s report, the Australian Broadcasting Authority (ABA) was the main government agency responsible for regulating Internet content in Australia. The Broadcasting Services Act 1992 gave it the power to investigate complaints about Internet content, to develop codes of conduct for the Internet industry, and to register those codes and monitor compliance with them (Australian Human Rights Commission, 2002). The ABA could also provide advice and information to the community on Internet safety issues, particularly in relation to children’s use of the Internet. Content posted on web pages was regulated by the ABA, which could even regulate and censor audio and lyrics posted on the Internet (Australian Human Rights Commission, 2002). All of this counts as stored content and is subject to the scheme.

Government collaboration with digital platforms on content management can be effective in preventing user data leakage and combating the spread of terrorism, extremism, and criminal activity.

There Are Also Some Negative Aspects of Platforms’ Cooperation With Governments

Government intervention in content regulation can also have negative consequences. It may limit users’ freedom of expression, because users may be afraid to express their true opinions for fear that their content will be censored and deleted. Governments may abuse their censorship power to remove content that is politically critical of them and to hide their own wrongdoing. Media reports that try to expose government misconduct may be forcibly deleted, or the government may publish false information to cover up what it has done.

Conclusion

In conclusion, platforms need to work with governments on content management, and each side can act as a check on the other. However, the powers of platforms and governments need to be balanced. The government can ensure that platforms comply with the law, and platforms can watch that the government does not abuse its power. This cooperation must also ensure that citizens’ freedom of expression is not restricted, that content moderation is transparent, and that information remains credible.

References

Australian Government. (2019, December 12). Government Response and Implementation Roadmap for the Digital Platforms Inquiry. https://treasury.gov.au/publication/p2019-41708

Australian Human Rights Commission. (2002). Internet Regulation in Australia. https://humanrights.gov.au/our-work/publications/internet-regulation-australia

Calibraint. (2023). Web 1.0 Vs. Web 2.0 Vs. Web 3.0: What’s The Difference? https://www.youtube.com/watch?v=GXJp9mJR2Q8

Gillespie, T. (2018). All Platforms Moderate. In Custodians of the Internet: Platforms, Content Moderation, and the Hidden Decisions That Shape Social Media (pp. 1-23). Yale University Press. https://web-s-ebscohost-com.ezproxy.library.sydney.edu.au/ehost/ebookviewer/ebook/bmxlYmtfXzE4MzQ0MDFfX0FO0?sid=72ac1a58-d781-4375-a01f-2137eec6e550@redis&vid=0&format=EB&lpid=lp_Cover-2&rid=0

Imagga. (2021). Why Is Content Moderation Important? https://www.youtube.com/watch?v=-SHFncU4h18

Ingham, A. (2023). 7 Real Life Cyberbullying Horror Stories. Family Orbit. https://www.familyorbit.com/blog/real-life-cyberbullying-horror-stories/

Kenton, W. (2023). What Is Web 2.0? Definition, Impact, and Examples. https://www.investopedia.com/terms/w/web-20.asp

Livingstone, S., & Smith, P. K. (2014). Annual research review: Harms experienced by child users of online and mobile technologies: The nature, prevalence and management of sexual and aggressive risks in the digital age. Journal of Child Psychology and Psychiatry, 55(6), 635-654. https://doi.org/10.1111/jcpp.12197

Roberts, S. T. (2017). Content moderation. eScholarship. Retrieved October 6, 2023, from https://escholarship.org/content/qt7371c1hf/qt7371c1hf.pdf