Content moderation on social media refers to the processes that companies undertake to define controversial content and keep their platforms clear of it; Gerrard (2018) describes it as how social media companies ‘make important decisions about what counts as “problematic” content and how they will remove it’. There are numerous reasons for moderation. The more problematic content is available, the more the platform’s reputation suffers, which drives away new and existing users. Although platforms encourage free speech, more extreme expressions could reduce advertisers’ interest in promoting on the platform. Platforms are also required to follow government policies on certain content (West, 2018), which contrasts with the original idea of the internet as a place free of governance and rules. Possibly the most important motive for regulating content is to prevent harm to others, which may arise when users copy dangerous activities shown in media or develop harmful behaviours through encouragement online. In this essay, I argue the case for more explicit and stronger moderation and regulation, focusing on content with a high likelihood of causing harm to others online.

Current Moderation Practices of Major Social Media Companies

According to Langlois et al. (2009), ‘[Web 2.0 companies] transform public discussion and regulate the coming into being of a public by imposing specific conditions, possibilities, and limitations of online use.’ These regulations can be found on the official websites of each platform, often under the term ‘community guidelines’. Across the most used non-messaging social media apps of 2023 (Wong, 2023), namely Facebook, YouTube, Instagram and TikTok, many of the rules share similar concerns but remain vague.

Among the guidelines restricting the promotion of harmful activities, YouTube and TikTok both have lengthy descriptions of minor safety, which ban dangerous acts involving, reproducible by, or directed towards minors, as well as sexualisation, exposure to mature themes and bullying or cyberbullying. Meta, the company presiding over Facebook and Instagram, does not have a dedicated minor safety policy, though it mentions disallowing sexual or violent content. In terms of self-injury, all four platforms ban the encouragement or glorification of self-harm and disordered eating.

Given the vast amount of content posted online each day, it is impossible for every single post to be reviewed against the community guidelines, so posts that violate these guidelines remain online. Over the last five years, numerous examples have arisen on different platforms of dangerous content influencing others to copy the original poster’s actions, leading to incidents with serious consequences.

Eating Disorder Content on Social Media

In 2012, Tumblr faced widespread criticism over the large number of ‘thinspiration’ blogs active on the platform, many of which promoted and glorified restrictive eating disorders as a rational method of losing weight and claimed that they were lifestyle choices rather than diseases (Gerrard, 2018).

Instagram and Pinterest were also drawn into this debate, and all three platforms began to moderate this content through hashtag moderation, which blocks the results of certain hashtag searches; according to Gerrard (2018), ‘Hashtags are perhaps the most visible form of social media communication, making them vulnerable to platforms’ interventions’. Although this method worked to a certain degree, the platforms’ recommendation algorithms continued to surface related content, so despite the restrictions on hashtagged media, pro-eating disorder posts still appeared in users’ feeds. Gerrard (2018) suggests that ‘This form of moderation appears to be designed to protect new users who are at risk of joining pro-ED and other such networks rather than those who are already embedded within them.’

Current Impacts of Pro-ED Content

Despite the 2012 controversy, a new surge of pro-eating disorder media has arisen on TikTok, leading a younger generation to develop unhealthy eating habits (Rando-Cueto et al., 2023). These accounts label themselves as ‘weight-loss’ accounts, list dangerously low goal weights in their profiles, and often upload ‘what I eat in a day’ videos showing extremely small amounts of food, as well as images of underweight female bodies that serve as motivation to stick to their regimen.

This surge is commonly seen under the label ‘#wonyoungism’, which refers to K-Pop idol Jang Wonyoung, who is known for her extremely slim figure and whose photos are often used in the aforementioned motivation carousels. These accounts are widespread in the online K-Pop community, which is used to seeing underweight body types and idealises them as a goal. Despite their clear glorification of eating disorders, many of these accounts bypass the community guidelines by writing ‘Not Promoting’ in their profiles in reference to eating disorders. Hobbs et al. (2021) note that ‘Users tweak hashtag spellings or texts in videos, such as writing d1s0rder instead of disorder. And innocent-sounding hashtags, such as #recovery, sometimes direct users to videos idealizing life-threatening thinness.’

Multiple news articles have been written about this issue. Milmo (2023) discusses a letter written by the Molly Rose Foundation and the National Society for the Prevention of Cruelty to Children (NSPCC), which demanded that TikTok moderate this type of content more comprehensively. According to a spokesperson, the platform removed all media mentioned in the report but has taken no further steps towards moderation.

The rapidly growing and shifting nature of online communities means that platforms can never catch every nuanced version of pro-eating disorder content, so thriving communities will always be present on the app. Although hashtag moderation helped to reduce the content available to new users, the situation becomes more complicated when different online communities intersect; in this case, the pro-eating disorder community and the K-Pop community. Banning all tags relating to Jang Wonyoung would be unfair to those who wish to post content about her without any mention of eating habits, and would go against the freedom of expression that defines the Web 2.0 era. Research must be conducted into new methods of moderation that can remove violating content from the app while allowing regulation-abiding posts to remain.

Instructional Videos on Social Media

Another example of social media posts that have proven harmful to viewers is the food-related ‘hacks’ posted on YouTube. As reported by numerous news articles in 2019, two Chinese girls (aged 12 and 14) suffered severe burns after treating a video by popular Chinese YouTuber Ms Yeah as instructional, and the 14-year-old died of her injuries (BBC News, 2019; Bostock, 2019; Zhang, 2019). How To Cook That (2019), a YouTube channel run by Ann Reardon, a qualified food scientist and dietician, goes into depth on this matter and additionally criticises ‘food hack’ channels on YouTube, such as 5 Minute Crafts or Blossom, that post unsafe food practices, including dipping strawberries into bleach and careless handling of extremely hot caramel. This raises a debate: if a viewer is harmed after following these videos, with whom does the responsibility lie, the platform or the viewer?

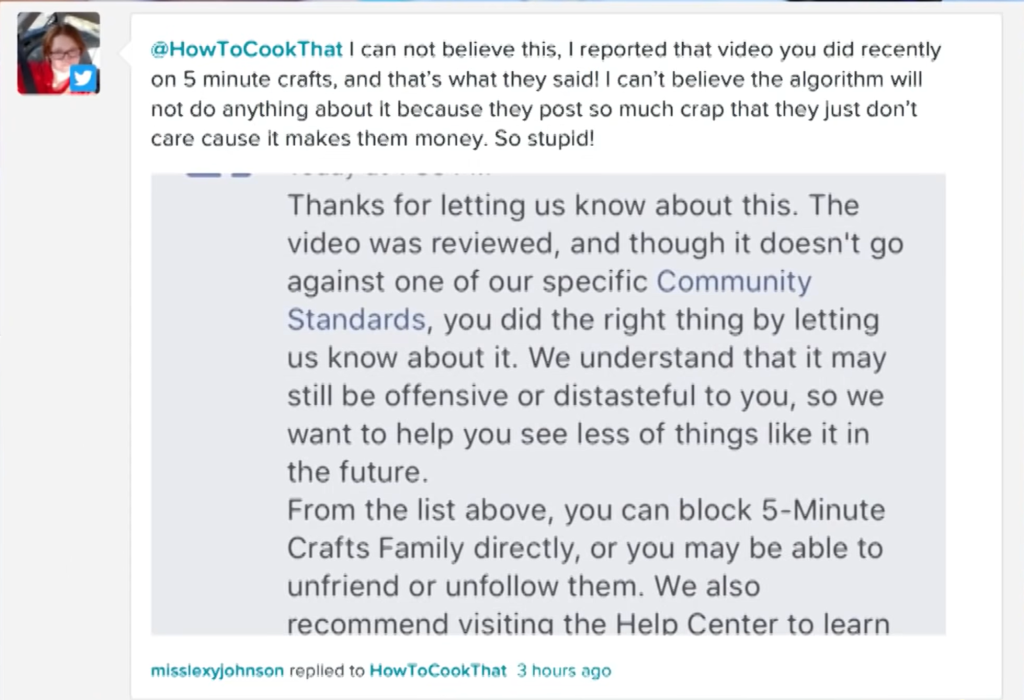

In the case of videos with misinformation, YouTube’s Harmful or dangerous content policy states that the platform will remove videos that ‘encourage dangerous or illegal activities that risk serious physical harm or death’ (YouTube Help, 2019). Although this should include unsafe food videos, a closer look at the policy shows that all the examples listed pertain to physical acts performed in videos and reproducible by others, i.e. acts that directly cause harm, rather than acts that merely have the potential to cause harm. In the previously mentioned How To Cook That (2019) video, Reardon shows screenshots of users reporting one particular dangerous video, along with YouTube’s response, which claims that the video does not go against any community guidelines. Given the nature and wording of these guidelines, this claim may well be true from the platform’s perspective.

Without explicit labels warning users against copying these videos, there is a high chance that they could cause harm, especially in the case of ‘food hack’ channels, which provide instructions on how to copy the performed act. The best means of prevention is for such videos to be removed. However, this approach clearly has not worked, so another method that could help is demonetising posts; without any profit, channels are much less likely to make unsafe videos (Ma & Kou, 2022). Although the risk would never be zero, the platform could at least be commended for taking action against them.

Concluding Remarks

The aforementioned examples demonstrate the need for stricter and more comprehensive content moderation on all social media platforms, especially those with a high proportion of young viewers, as this demographic is the most likely to follow instructions given, or copy deeds performed, by others. Harmful activities will always be performed online, but preventative measures taken by the platforms can greatly decrease the risk of significant harm to users.

Reference List

BBC News. (2019, September 20). YouTuber pays compensation after ‘copycat’ death. https://www.bbc.com/news/49765176

Bostock, B. (2019, September 20). A teenager died after being severely burnt when she reportedly copied a video of a popular Chinese YouTuber cooking popcorn in a soda can. Insider. https://www.insider.com/chinese-teen-dies-after-reportedly-copying-viral-cooking-video-2019-9

Gerrard, Y. (2018). Beyond the hashtag: Circumventing content moderation on social media. New Media & Society, 20(12). 4415-4838. https://doi.org/10.1177/1461444818776611

Hobbs, T. D., Barry, R. & Koh, Y. (2021, December 17). ‘The Corpse Bride Diet’: How TikTok Inundates Teens With Eating-Disorder Videos. The Wall Street Journal. https://www.house.mn.gov/comm/docs/w8cOnyZ3ZUKtRrspUZ0NFg.pdf

How To Cook That. (2019, October 4). Exposing Dangerous how-to videos 5-Minute Crafts & So Yummy | How To Cook That Ann Reardon. [Video]. YouTube. https://www.youtube.com/watch?v=CEQaYdvs478

Langlois, G., Elmer, G., Fenwick, M. & Devereaux, Z. (2009). Networked Publics: The Double Articulation of Code and Politics on Facebook. Canadian Journal of Communication, 34(3). 415-434. http://dx.doi.org/10.22230/cjc.2009v34n3a2114

Ma, R. & Kou, Y. (2022). “I am not a YouTuber who can make whatever video I want. I have to keep appeasing algorithms”: Bureaucracy of Creator Moderation on YouTube. CSCW’22 Companion: Companion Publication of the 2022 Conference on Computer Supported Cooperative Work and Social Computing. 8-13. https://doi.org/10.1145/3500868.3559445

Milmo, D. (2023, March 4). TikTok ‘acting too slow’ to tackle self-harm and eating disorder content. The Guardian. https://www.theguardian.com/technology/2023/mar/03/tiktok-too-slow-tackle-self-harm-eating-disorder-content

Rando-Cueto, D., de las Heras-Pedrosa, C. & Paniagua-Rojano, F. J. (2023). Health Communication Strategies via TikTok for the Prevention of Eating Disorders. Systems, 11(6). 274. https://doi.org/10.3390/systems11060274

West, S. M. (2018). Censored, suspended, shadowbanned: User interpretations of content moderation on social media platforms. New Media & Society, 20(11). 3961-4412. https://doi.org/10.1177/1461444818773059

Wong, B. (2023, May 18). Top Social Media Statistics And Trends Of 2023. Forbes. https://www.forbes.com/advisor/business/social-media-statistics/

YouTube Help. (2019). Harmful or dangerous content policy. https://support.google.com/youtube/answer/2801964?hl=en&ref_topic=9282436#zippy=%2Charmful-or-dangerous-acts%2Cdangerous-or-threatening-pranks%2Cextremely-dangerous-challenges

Zhang, P. (2019, September 19). Chinese internet chef pays compensation to family of girl killed ‘imitating popcorn trick’. South China Morning Post. https://www.scmp.com/news/china/society/article/3028083/chinese-internet-chef-pays-compensation-family-girl-killed