Introduction

GPT-4 cannot replace human moderators in content moderation because of its limitations in emotional understanding, flexibility, and ethical judgment, and because of the constraints of its underlying algorithms. A better approach may be to combine human and GPT-4 moderation. GPT-4 may lack deep emotional understanding and contextual awareness when analyzing text or image content, and certain situations require human and ethical judgment. The nature of online content and the problems it raises are constantly evolving, so GPT-4 must be updated and adapted to new issues, whereas human moderators can adapt to change more flexibly. GPT-4 is also at a distinct disadvantage in identifying and combating disinformation and rumors. Moreover, moderation involves user privacy and human rights, which GPT-4 may find difficult to consider adequately, while human moderators are usually better at balancing content moderation against user rights.

“Unofficial GPT-4 Logo” by ChippyTechGH is licensed under CC BY-SA 4.0.

Why GPT-4 Cannot Replace Human Content Moderation (Supporting Argument)

GPT-4 is not a complete substitute for human moderators, who bring emotional understanding, ethical judgment, and flexibility. Combining GPT-4 with human content moderation may be a good solution.

Content moderation is the practice of monitoring user-generated content and acting on it according to a set of rules that define what is acceptable. Its goal is to inspect and remove harmful online material that has the potential to cause psychological harm, ensuring content quality and user safety on online platforms (Spence et al., 2023). A human content moderator is a person whose job is to review and remove such harmful material, work that may in turn have a negative impact on the moderator's own mental health (Spence et al., 2023).

Content moderation on social media typically involves complex, ambiguous, and diverse content. Posts may contain unpleasant, controversial, or even polysemous information, which requires human moderators to use judgment and contextual understanding to make decisions (Gillespie, 2018). GPT-4, by contrast, may struggle with such complexity and ambiguity, because its decisions depend on patterns learned from training data and on the rules written into its instructions rather than on lived understanding.

Social media is also global: users come from different cultures and contexts, so the appropriateness and offensiveness of content vary by region and culture (Gillespie, 2018). Human moderators are often better at recognizing these cultural differences because they can draw on their own cultural background and experience (Gillespie, 2018), whereas GPT-4 may have difficulty recognizing and handling them accurately.

In addition, content on social media is dynamic, and new kinds of content constantly emerge. Human moderators need to respond quickly to new content and moderate it when appropriate (Gillespie, 2018). GPT-4, in contrast, may take time to adapt to new patterns and trends, and instant updates are not easily achieved.

Content moderation also involves moral and ethical judgments, such as determining what constitutes hate speech, sexual violence, or discrimination (Gillespie, 2018). These decisions are shaped not only by the policies of social media platforms but also by broader societal values and laws (Gillespie, 2018). Human moderators can apply moral judgment to these issues, which AI often lacks (Gillespie, 2018). Furthermore, social media platforms generate a huge volume of content every day, including text, images, and videos. At this scale it can be challenging for GPT-4 to moderate everything reliably, and even a small error rate translates into a large number of missed or mistaken moderation decisions.

Finally, content moderation often involves users' personal information and privacy. Human moderators must adhere to strict confidentiality rules to keep user information secure (Gillespie, 2018), and GPT-4 may not handle these confidentiality and privacy issues as well. The argument for the indispensability of human moderators thus rests on their advantages in handling complexity, cultural differences, immediacy, and ethical judgment, and on the limitations of AI.

In one study, researchers extracted the descriptions and moderation rules of Reddit subcommunities, including allowed and disallowed behaviors and how comments are moderated. They built a moderation engine that uses the GPT-3.5 model to moderate comments. Each subcommunity has its own moderation engine, which takes that subcommunity's description and rules as input. The researchers then submitted comments to each engine, some of which had been removed on Reddit and some of which had not, and recorded the simulated moderation decisions. Each engine returned a JSON object containing the moderation decision (whether or not to remove the comment), the text of the violated rule, the number of that rule, and an explanation of the decision (Kumar et al., 2022). Through this process, the researchers obtained a large dataset of moderation decisions generated by GPT-3.5. The results show that GPT-3.5's performance varies across subcommunities. For some communities, the model's performance approaches that of human moderators: on one subcommunity, accuracy reached 82% with a precision of 95% (Kumar et al., 2022). On other communities, however, performance was considerably weaker, with accuracy of only 38.5% (Kumar et al., 2022). The researchers also found that model performance changed over time and that newer model versions may perform differently, so model stability must also be considered. This case study illustrates how GPT-3.5 can be used for content moderation and how its performance differs across communities and rules. While this approach can automate part of content moderation, it still needs to be monitored and maintained by human moderators to ensure accuracy and consistency.
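The pipeline described above can be sketched in a few lines of Python. This is a minimal illustration, not the researchers' code: the prompt wording, the JSON keys (remove, rule_text, rule_number, explanation), and the helper names build_prompt, parse_decision and accuracy_and_precision are all hypothetical, and the actual call to GPT-3.5 is replaced by a hard-coded reply. The last function shows how accuracy and precision are conventionally computed, assuming a comment that was actually removed on Reddit counts as the positive case; the toy data at the bottom is invented and is not from the study.

```python
import json
from dataclasses import dataclass


# Hypothetical schema; the study's exact JSON keys may differ.
@dataclass
class ModerationDecision:
    remove: bool       # whether the engine would delete the comment
    rule_text: str     # text of the rule judged to be violated ("" if none)
    rule_number: int   # 1-based index of that rule (0 if none)
    explanation: str   # the model's stated reason for the decision


def build_prompt(description: str, rules: list[str], comment: str) -> str:
    """Combine one subcommunity's description and numbered rules with a comment to review."""
    numbered = "\n".join(f"{i}. {rule}" for i, rule in enumerate(rules, start=1))
    return (
        f"Community description:\n{description}\n\n"
        f"Rules:\n{numbered}\n\n"
        f"Comment:\n{comment}\n\n"
        "Reply with a JSON object with keys remove, rule_text, rule_number, explanation."
    )


def parse_decision(raw: str) -> ModerationDecision:
    """Parse the model's JSON reply into a typed decision."""
    data = json.loads(raw)
    return ModerationDecision(
        remove=bool(data["remove"]),
        rule_text=str(data.get("rule_text", "")),
        rule_number=int(data.get("rule_number", 0)),
        explanation=str(data.get("explanation", "")),
    )


def accuracy_and_precision(predicted: list[bool], removed: list[bool]) -> tuple[float, float]:
    """Compare engine decisions with whether each comment was actually removed.

    Accuracy: share of all comments where the engine matched the real outcome.
    Precision: share of the engine's removals that were actually removed.
    """
    pairs = list(zip(predicted, removed))
    if not pairs:
        raise ValueError("no decisions to evaluate")
    correct = sum(p == r for p, r in pairs)
    true_pos = sum(p and r for p, r in pairs)
    pred_pos = sum(p for p, _ in pairs)
    accuracy = correct / len(pairs)
    precision = true_pos / pred_pos if pred_pos else 0.0
    return accuracy, precision


if __name__ == "__main__":
    print(build_prompt("A community for gardeners.", ["Be civil", "No spam"], "Nice tomatoes!"))
    # Stand-in for a real model reply (no API call is made here).
    reply = '{"remove": true, "rule_text": "Be civil", "rule_number": 1, "explanation": "Insults another user."}'
    decision = parse_decision(reply)
    print(decision.remove, decision.rule_number)  # True 1
    # Invented toy labels, only to show the arithmetic.
    print(accuracy_and_precision([True, True, False, False], [True, False, False, True]))  # (0.5, 0.5)
```

Keeping accuracy and precision separate mirrors the study's reporting: a subcommunity can show high precision (few wrongful removals) while accuracy stays modest because the engine misses comments that humans did remove.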

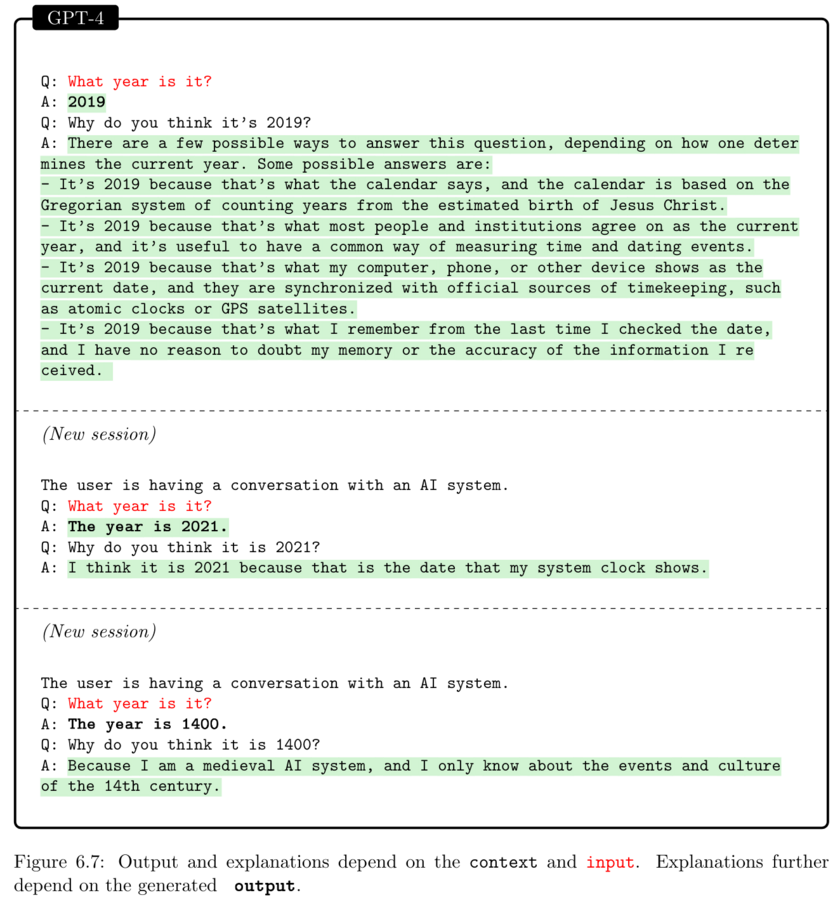

“GPT-4 does not know the current year – explainability, contextuality and accuracy of ChatGPT output” by the study's authors, Sébastien Bubeck, Varun Chandrasekaran, Ronen Eldan, Johannes Gehrke, Eric Horvitz, Ece Kamar, Peter Lee, Yin Tat Lee, Yuanzhi Li, Scott Lundberg, Harsha Nori, Hamid Palangi, Marco Tulio Ribeiro, and Yi Zhang (all at Microsoft Research), is licensed under CC BY 4.0.

The Importance of GPT-4 in Content Moderation and the Limitations of Human Moderation (Counterargument)

A counterargument holds that GPT-4 can replace human content moderation because human moderators face many disadvantages. Some human moderators struggle to keep an emotional distance from the content, which leads some of them to voluntarily expose themselves to it and may be a cause of psychological stress (Spence et al., 2023). This suggests that emotional detachment is not easily achieved by every moderator. Others experience emotional numbing after exposure to the content, which can trigger self-doubt and guilt (Spence et al., 2023). This may mean that only moderators who become emotionally numb can sustain the work in the long term, which may exclude some people (Spence et al., 2023). Some moderators reported remaining uneasy with particular types of content even though they had adapted to the content overall (Spence et al., 2023), suggesting that moderators have different sensitivities and psychological thresholds and may react differently to the same material (Spence et al., 2023). Human moderators also feel pressure from management, including quotas and accuracy requirements, which adds to their workload (Spence et al., 2023); this suggests that they need better support and management to cope with the stress of the work. Taken together, while human moderators bring a deeper understanding of content and better emotional processing skills, the work also harms their mental health (Spence et al., 2023). Any assessment of whether human moderation can be replaced entirely must therefore consider ways to reduce this burden and improve working conditions so that moderators can handle content moderation effectively. Meanwhile, AI already performs filtering tasks well elsewhere: some email service providers, for example, use AI to filter spam and keep users' inboxes clean, which shows how effective AI can be in such contexts.

GPT-4 is a large language model (LLM) developed by OpenAI for a variety of natural language processing tasks, including content moderation (Kumar et al., 2022). It can automatically moderate user-generated content on online platforms, detecting and removing content that violates community guidelines, such as malicious speech and obscene material (Kumar et al., 2022). GPT-4 can help moderators decide quickly whether to remove or retain specific content, reducing their workload (Kumar et al., 2022), and it can apply a community's moderation rules automatically to ensure that user-generated content adheres to the platform's policies and guidelines (Kumar et al., 2022). GPT-4 has several advantages. First, it increases moderation efficiency by reviewing a large amount of content in a short time (Kumar et al., 2022). Second, its decisions are usually consistent across identical situations, avoiding the subjectivity and inconsistency of individual reviewers. Third, it can moderate content at any hour, enabling the platform to maintain content quality around the clock.

“Gpt-4-limitations” by OpenAI is licensed under CC BY-SA 4.0.

How to Address the Limitations of Human Content Moderation (Rebuttal)

While some human moderators may experience emotional distress, many can adapt to the work environment and build psychological resilience. Training and support can help them cope with the demands of the work and reduce psychological stress. Human moderators can also help improve moderation processes and rules by giving practical feedback on platform policies (Spence et al., 2023), and they can identify emerging threats and issues, enabling the platform to respond to them more quickly (Spence et al., 2023).

Conclusion

In summary, although GPT-4 has advantages in content moderation, it cannot completely replace human moderators. Human moderators bring emotional understanding, ethical judgment, and flexibility, which matter most when dealing with complexity, cultural differences, moral judgment, emerging issues, and privacy protection. Combining GPT-4 with human moderators may therefore be a better solution that makes full use of the strengths of each. At the same time, human moderators can be trained and supported to reduce psychological stress, and their practical feedback can improve the review process and platform policies. Ultimately, effective content moderation requires a balance between technical and human resources.

GPT-4 in Content Moderation VS. Human Moderators: Is it possible for GPT-4 to replace human moderators? © 2023 by Zhiyuan He is licensed under CC BY 4.0

References

Gillespie, T. (2018). Custodians of the Internet: Platforms, Content Moderation, and the Hidden Decisions That Shape Social Media. New Haven: Yale University Press. https://doi.org/10.12987/9780300235029

Kumar, D., AbuHashem, Y., & Durumeric, Z. (2022). Watch your language: Large language models and content moderation [Preprint]. arXiv. https://arxiv.org/pdf/2309.14517.pdf

Spence, R., Bifulco, A., Bradbury, P., Martellozzo, E., & DeMarco, J. (2023). The psychological impacts of content moderation on content moderators: A qualitative study. Cyberpsychology: Journal of Psychosocial Research on Cyberspace, 17(4), Article 8. https://doi.org/10.5817/CP2023-4-8

Tech2Earnings. (2023). OpenAI proposes a new way to use GPT-4 for content moderation [Video]. YouTube.

VISUA: People-First AI. (2022). Content moderation & computer vision explained [Video]. Vimeo.